NVIDIA NeMo Megatron & Large Language Models

This release features new techniques powered by NVIDIA Research breakthroughs that deliver more than 30% faster training times for GPT-3 models. NeMo Megatron is an end-to-end framework that spans data curation, training, inference, and evaluation.

Introduction

NVIDIA announced the latest version of the NeMo Megatron Large Language Model (LLM) framework.

The release features new techniques powered by NVIDIA Research that deliver more than 30% faster training times for GPT-3 models.

Large Language Models can contain up to trillions of parameters, which is what enables them to learn from text.

These new updates to NeMo Megatron include a new hyper-parameter tool to optimise training and deployment, providing greater accessibility for industries adopting LLMs for new AI-powered services.

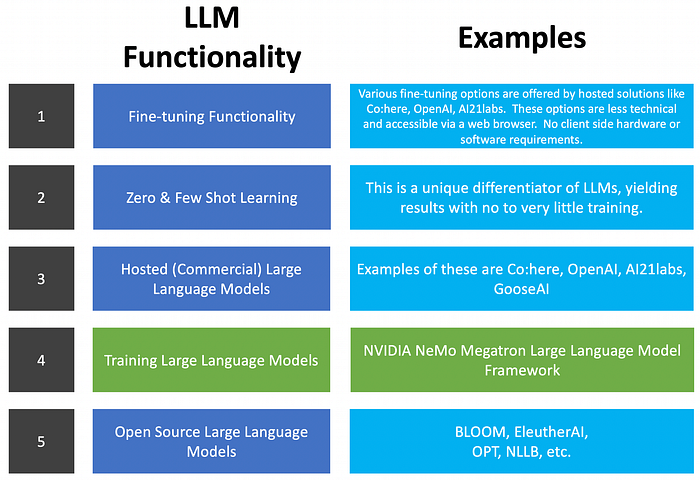

Large Language Models & Associated Functionality

LLM and related technologies can be broken down into five basic categories, as seen below...

Starting at number five…

5️⃣ There are a number of open-source LLMs available. These include BLOOM and others. There are a few challenges with these open-source models…

Hosting and processing costs need to be considered, as does latency when regionally and geographically dispersed installations are not available. There are other avenues available, like using the Inference API from 🤗 Hugging Face at minimal cost when starting out.

Another example is Goose AI’s commercial offering, backed by EleutherAI, a group of researchers who work on LLMs and also release their models publicly.

4️⃣ This is where NVIDIA really comes into its own. With the latest version of the NVIDIA NeMo Megatron Large Language Model (LLM) framework, organisations can achieve more than 30% faster training times for GPT-3 models. There is an opportunity for companies to offer customised GPT-3 models as a service. For instance, an enterprise can create a fully customised and industry-specific LLM.

3️⃣ The hosted commercial Large Language Models (LLMs) have received much attention of late, with Co:here, OpenAI and AI21Labs being the big commercial offerings.

There are also solutions like Botpress’ OpenBook that leverage large language models to bootstrap a chatbot implementation. I have also recently written about and shared an architecture for bootstrapping a chatbot with LLMs.

Considering the pressures mentioned in points five and four, these companies will have to differentiate themselves on four fronts:

- Ease of access and adoption; playgrounds are a key aspect of this.

- Simplified no-code to low-code fine-tuning and customisation with industry- or sector-specific data.

- Accurate, fine-tuneable semantic search and visual clustering.

- Cost and strategic market alliances.

Another big threat is smaller companies leveraging open-sourced models and making money out of services, hosting and development.

2️⃣ The zero-shot and few-shot learning capabilities of LLMs are astounding, especially in two areas.

The first is completion (generation), where a sentence or paragraph is completed in context. The second is a general, highly contextual chatbot.

1️⃣ Methods of fine-tuning can be a differentiating factor for the large commercial LLM offerings. I hasten to add that this development by NVIDIA might make the customisation of GPT-3 models easier, and see it offered as a service by companies.

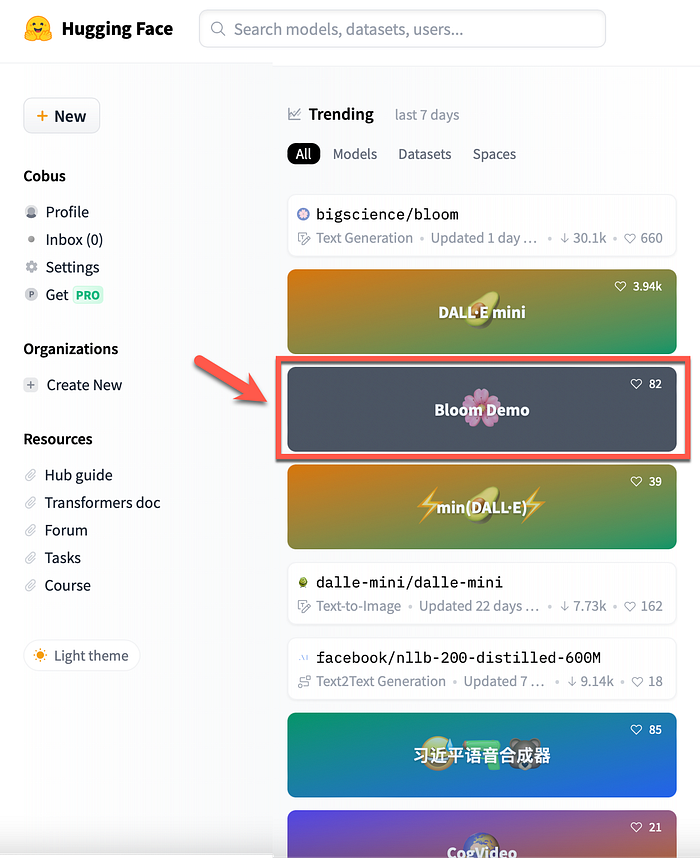
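The few-shot completion described in point two can be sketched in a few lines of Python. This is only an illustration of how such a prompt is typically assembled, not the method of any particular vendor: the function name `build_few_shot_prompt`, the `Text:`/`Intent:` layout, and the intent labels are all hypothetical choices made for this example. The resulting string would be sent to whichever LLM completion endpoint is in use.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    instruction: str,
    examples: List[Tuple[str, str]],
    query: str,
) -> str:
    """Assemble a few-shot prompt; with an empty example list it is zero-shot."""
    parts = [instruction.strip(), ""]
    for text, label in examples:
        # Each worked example shows the model the input/output pattern.
        parts.append(f"Text: {text}")
        parts.append(f"Intent: {label}")
        parts.append("")
    # The final block is left open so the model completes the missing label.
    parts.append(f"Text: {query}")
    parts.append("Intent:")
    return "\n".join(parts)


# Hypothetical customer-service utterances and intent labels.
examples = [
    ("I want to change my delivery address", "update_address"),
    ("Where is my parcel?", "track_order"),
]

prompt = build_few_shot_prompt(
    "Classify the intent of each customer message.",
    examples,
    "My package has not arrived yet",
)
print(prompt)
```

Passing an empty list of examples turns the same function into a zero-shot prompt, which makes it easy to compare the two approaches on the same query.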

BLOOM

BLOOM is the world’s largest open-science, open-access multilingual large language model (LLM). With 176 billion parameters, it was trained using the NVIDIA AI platform and can generate text in 46 languages.

The fact that large language models are being open-sourced, together with the high cost of accessing a number of commercial LLMs, raises the question…

Is it becoming easier and more viable for an increasing number of competitors to enter the LLM services space, where open-source LLMs are leveraged by hosting the models and making them available as a service?

And how sustainable are some of the current, more expensive LLM offerings? Are the differentiators worth the expense?

These questions are especially pertinent considering the technology involved in training BLOOM, the pivotal role NVIDIA played in it, and the future possibilities.

Besides trying to break the stranglehold of big firms on large language models, BLOOM aims to address the biases that large language models inherit from the datasets they train on.

Conclusion

We live in exciting times, where we are seeing an expansion in the field of language technology, especially with large language models becoming commercially and generally available.

When it comes to Conversational AI, currently there is a dire need for laser focus on robust and meaningful customer service, user experience, and user bot/skill orchestration. Hence the importance of customised/fine-tuned training.

NeMo Megatron is a quick, efficient, and easy-to-use end-to-end containerised framework for collecting data, training large-scale models, evaluating models against industry-standard benchmarks, and running inference with state-of-the-art latency and throughput performance.

It makes LLM training and inference easy and reproducible on a wide range of GPU cluster configurations.

Currently, these capabilities are available to early-access customers to run on NVIDIA DGX SuperPODs and NVIDIA DGX Foundry. Support for other cloud platforms is coming shortly.

Also, the features can be tried out on NVIDIA LaunchPad, a free program that provides users short-term access to a catalog of hands-on labs on NVIDIA-accelerated infrastructure.

This development dovetails well with NVIDIA Riva, and NVIDIA seemingly wants to solve the ailments of Conversational AI on a larger scale.