Recently Yellow.ai Announced DynamicNLP For Chatbot Development

DynamicNLP is an innovative way to bootstrap intent development for a digital assistant.

I’m a deeply curious person, so when I see new technology like DynamicNLP that seems to solve old problems with a novel approach, I can’t help trying to reverse-engineer it. 🙂 I’d love to hear from the Yellow.ai folks how close or far this is from the actual internals!

I also discuss Gartner’s guidance on successful chatbot deployment, the extent to which synthetic data can suffice for bootstrapping, and the importance of using real customer conversations.

The TL;DR

- Recently Yellow.ai announced DynamicNLP as a zero-shot learning approach to chatbot development, negating the need for training data or even training examples…

- DynamicNLP most probably leverages generative Large Language Models (LLMs). More detail on this later in the article.

- DynamicNLP leverages intent names (at least 3 words) and one training sentence to create training data in the form of generated possible user utterances.

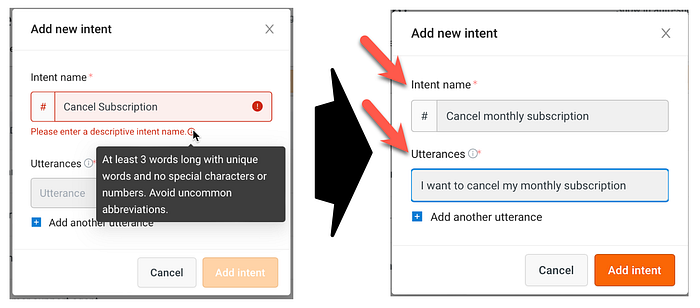

- Clear guidance is given for intent names to fully leverage DynamicNLP.

- From this data, an LLM generative model produces semantically similar user utterances for each user-defined intent. Generation is based on two inputs, both supplied by the user: the intent name, which must be a three-word descriptive phrase, and a single example training sentence.

- User input is most probably matched to a semantically similar cluster (an intent) based on semantic search.

- Once the user input is successfully matched to an intent, it can in turn be added to that intent’s generated utterances, creating a positive feedback loop.

- DynamicNLP is most probably limited to the English language at this stage.

- DynamicNLP should be seen as a method for bootstrapping a chatbot, not as a long-term solution.

- Other avenues for bootstrapping include search and other LLM implementations.
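The matching and feedback-loop bullets above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Yellow.ai’s implementation: `embed` is a toy bag-of-words stand-in for an LLM embedding, and `match_and_learn` and its `threshold` of 0.5 are hypothetical names and values chosen for the example.

```python
import math
import re
from collections import Counter
from typing import Optional


def embed(text: str) -> Counter:
    # Toy stand-in for an LLM embedding (assumption): bag-of-words term counts.
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse term-count vectors.
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def match_and_learn(user_input: str, intents: dict[str, list[str]],
                    threshold: float = 0.5) -> Optional[str]:
    """Match the input to the intent holding its most similar utterance.
    On a confident match, append the input to that intent's utterances:
    this is the positive feedback loop."""
    query = embed(user_input)
    best_intent, best_score = None, 0.0
    for name, utterances in intents.items():
        score = max(cosine(query, embed(u)) for u in utterances)
        if score > best_score:
            best_intent, best_score = name, score
    if best_intent is None or best_score < threshold:
        return None  # no confident match: leave the training data untouched
    if user_input not in intents[best_intent]:
        intents[best_intent].append(user_input)
    return best_intent
```

The threshold is the important design choice: without it, every input, however unrelated, would be appended to some intent and gradually pollute the generated data.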

Please follow me on LinkedIn for updates on Conversational AI and more. 🙂

As seen above, product- or service-specific data does seem to come through from time to time. This makes me think there’s a chance DynamicNLP simply embeds the intent name and performs a nearest-neighbour search, without generating anything.
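If that nearest-neighbour hypothesis is right, the build step reduces to retrieval rather than generation. A minimal sketch, assuming a hypothetical pool of existing sentences (for example, mined from product or service content) and the same kind of toy bag-of-words `embed` in place of a real LLM embedding; `nearest_sentences` is a hypothetical name:

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy stand-in for an LLM embedding (assumption): bag-of-words term counts.
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse term-count vectors.
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def nearest_sentences(intent_name: str, pool: list[str], k: int = 3) -> list[str]:
    """Rank an existing sentence pool by similarity to the intent name.
    Nothing is generated: every suggestion already exists in the pool,
    which is how domain-specific wording could surface in the results."""
    query = embed(intent_name)
    ranked = sorted(pool, key=lambda s: cosine(query, embed(s)), reverse=True)
    return ranked[:k]
```

A telltale sign of this approach would be suggestions that contain wording no generic model would produce from a three-word name alone.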

How Does DynamicNLP Work?

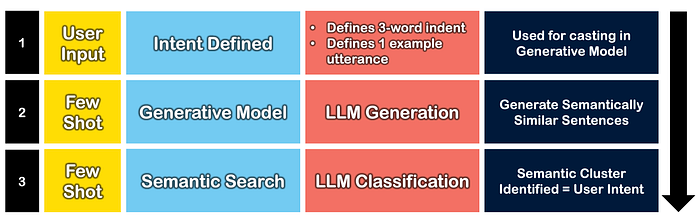

The most probable sequence of events that constitutes DynamicNLP is shown in the diagram. Steps one and two are performed at build time; step three is performed at run time.

Step 1 — Intent Defined (Build Phase)

I would argue that DynamicNLP is not zero-shot learning but few-shot learning. The reason is that when an intent is defined, the few-shot training data is supplied by the user in the following way…

- An intent name of three descriptive words, sans abbreviations or numbers.

- An example utterance for the intent, as seen below in the diagram.
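The two user-supplied inputs and the naming guidance can be expressed as a small build-time check. This is a hedged sketch: `IntentDefinition`, `intent_name_problems` and `validate` are hypothetical names, `min_words=3` follows the "at least 3 words" guidance from the TL;DR, and the abbreviation test is a crude heuristic of my own, not Yellow.ai’s rule.

```python
from dataclasses import dataclass


@dataclass
class IntentDefinition:
    """Hypothetical container for what the user supplies at build time."""
    name: str               # e.g. "lost card replacement"
    example_utterance: str  # e.g. "How can I replace my card?"


def intent_name_problems(name: str, min_words: int = 3) -> list[str]:
    """Check an intent name against the guidance: enough descriptive
    words, no abbreviations, no numbers."""
    problems = []
    words = name.split()
    if len(words) < min_words:
        problems.append(f"needs at least {min_words} words")
    if any(ch.isdigit() for ch in name):
        problems.append("contains numbers")
    # Heuristic (assumption): all-caps words such as "ATM", or dotted
    # tokens such as "acct.", are treated as abbreviations.
    if any((w.isupper() and len(w) > 1) or "." in w for w in words):
        problems.append("contains abbreviations")
    return problems


def validate(intent: IntentDefinition) -> list[str]:
    """Return every problem found; an empty list means the definition passes."""
    problems = intent_name_problems(intent.name)
    if not intent.example_utterance.strip():
        problems.append("missing example utterance")
    return problems
```

For example, `validate(IntentDefinition("lost card replacement", "How can I replace my card?"))` returns an empty list, while a name like "replace lost ATM card" is flagged for its abbreviation.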

Step 2 — Generative Model (Build Phase)

Below is an example where the Cohere generative LLM is used to generate similar sentences.

This is done by casting the training data into a few-shot prompt. In the snippet below you can see the training data: the intent name is “lost card replacement” and the single example user utterance is “How can I replace my card?”

This program generates similar sentences.

--

Sentence: Open new account

Sentence: How can I open a new account?

Sentence: What is the procedure to open a new account?

Sentence: new accounts please...

--

Sentence: lost card replacement

Sentence: How can I replace my card?

After the generative model is run with the training data above, the 12 sentences below are generated. You can see these sentences are semantically similar.

Sentence: I want to replace my card.

Sentence: Do you have a replacement?

Sentence: a replacement that is the same as my card

Sentence: How do I replace my card?

Sentence: I lost my card, what should I do?

Sentence: what should I do if I lose my card?

Sentence: if you lose your card, what should you do?

Sentence: I lost my card, what should I do?

Sentence: lost card replacement.

Sentence: How can I replace my card?

Sentence: I lost my card, what should I do?

Sentence: what should I do if I lose my card?

Below is a screenshot from the Yellow.ai UI with the three-word intent name, the training utterance example and the generated user utterances. It mirrors the Cohere LLM example just explained.
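The prompt and the generated list above can be reproduced programmatically. The sketch assumes a plain-text completion interface: the actual call to Cohere’s generative model is deliberately left out, and `build_prompt` and `parse_generations` are hypothetical helper names. The parser also de-duplicates, since the raw output above repeats “I lost my card, what should I do?” three times and echoes both seed sentences.

```python
def build_prompt(examples: list[list[str]], target: list[str]) -> str:
    """Assemble a few-shot prompt in the '--' / 'Sentence:' layout above.
    Each block is an intent name followed by sample utterances; the last
    block is the intent to expand (its name plus one example utterance)."""
    lines = ["This program generates similar sentences.", ""]
    for block in examples + [target]:
        lines.append("--")
        lines.extend(f"Sentence: {sentence}" for sentence in block)
    # End on an open "Sentence:" so the model continues the last block.
    lines.append("Sentence:")
    return "\n".join(lines)


def parse_generations(completion: str, seeds: list[str]) -> list[str]:
    """Extract generated sentences from the model's continuation, stopping
    at the next '--' separator and dropping duplicates and seed echoes."""
    seen = {s.lower().rstrip(".?!") for s in seeds}
    results = []
    for raw in completion.splitlines():
        line = raw.strip()
        if line == "--":
            break  # the model has started inventing a new example block
        if line.startswith("Sentence:"):
            line = line[len("Sentence:"):].strip()
        key = line.lower().rstrip(".?!")
        if line and key not in seen:
            seen.add(key)
            results.append(line)
    return results
```

The string returned by `build_prompt` would be sent to the generative model, and its completion passed to `parse_generations` together with the target block as `seeds`.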


Step 3 — Semantic Search (Run-Time)

At run time, when the user enters a sentence, LLM-powered semantic search can be used to find the appropriate intent. In essence, an intent is merely a cluster of semantically similar sentences.
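Treating each intent as a cluster suggests a simple run-time classifier: compare the user’s sentence with each cluster’s centroid and pick the closest. A minimal sketch, assuming a toy bag-of-words `embed` in place of LLM embeddings; `centroid`, `classify` and the `threshold` of 0.3 below which no intent is returned are hypothetical choices for illustration.

```python
import math
import re
from collections import Counter
from typing import Optional, Tuple


def embed(text: str) -> Counter:
    # Toy stand-in for an LLM embedding (assumption): bag-of-words term counts.
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse vectors.
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def centroid(sentences: list[str]) -> Counter:
    # Mean of the sentence vectors that make up one intent cluster.
    total = Counter()
    for sentence in sentences:
        total.update(embed(sentence))
    return Counter({token: count / len(sentences) for token, count in total.items()})


def classify(user_input: str, intents: dict[str, list[str]],
             threshold: float = 0.3) -> Tuple[Optional[str], float]:
    """Return (intent, score) for the closest centroid, or (None, score)
    when even the best match falls below the threshold."""
    query = embed(user_input)
    scores = {name: cosine(query, centroid(utts)) for name, utts in intents.items()}
    best = max(scores, key=scores.get)
    if scores[best] < threshold:
        return None, scores[best]
    return best, scores[best]
```

Returning `None` for low scores gives the bot a natural hook for a fallback response, and those unmatched inputs are exactly the real-world utterances worth reviewing later.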

Below is an example from the Cohere playground where their embeddings are leveraged to create clusters from the sentences.
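The playground’s own clustering method is not reproduced here. As a stand-in, the sketch below uses simple single-pass “leader” clustering: each sentence joins the first cluster whose founding sentence is similar enough, otherwise it starts a new cluster. The bag-of-words `embed`, the `leader_cluster` name and the 0.5 threshold are all assumptions. With raw word counts, shared filler such as “how can I” inflates similarity, which is precisely what semantic embeddings are meant to avoid.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy stand-in for an LLM embedding (assumption): bag-of-words term counts.
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse term-count vectors.
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def leader_cluster(sentences: list[str], threshold: float = 0.5) -> list[list[str]]:
    """Single pass: compare each sentence with the first sentence (the
    'leader') of every existing cluster; join the first close-enough
    cluster, or start a new one."""
    clusters: list[list[str]] = []
    for sentence in sentences:
        vector = embed(sentence)
        for cluster in clusters:
            if cosine(vector, embed(cluster[0])) >= threshold:
                cluster.append(sentence)
                break
        else:
            clusters.append([sentence])
    return clusters
```

Run over real customer utterances instead of generated ones, each resulting cluster becomes a candidate intent, which is the direction the Gartner guidance below points in.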

In Conclusion

- Gartner states that customer conversations should guide and initiate any chatbot development process, before any choice of chatbot development framework or technology.

- Gartner also advises that real customer conversations should be used to create semantically similar clusters (intents).

- Business intents should then align with user intents.

- DynamicNLP starts with the user defining intents. However, this can serve as a starting point to collect real-world utterance data.

- DynamicNLP can serve well to bootstrap a chatbot, but ideally real-world user utterances should define intents as soon as possible.


I’m currently the Chief Evangelist @ HumanFirst. I explore and write about all things at the intersection of AI and language, ranging from LLMs, chatbots, voicebots and development frameworks to data-centric latent spaces and more.