Retrieval Augmented Generation (RAG) Safeguards Against LLM Hallucination

Supplying a contextual reference in the prompt increases the accuracy of LLM responses and reduces hallucination. This article walks through a few practical examples that illustrate why explicit, relevant context should be part of prompt engineering.

I’m currently the Chief Evangelist @ HumanFirst. I explore & write about all things at the intersection of AI & language; ranging from LLMs, Chatbots, Voicebots, Development Frameworks, Data-Centric latent spaces & more.

The Retrieval Augmented Generation (RAG) feature of LLM systems allows businesses to utilise their own data for generating responses.

This technique enables in-context learning without costly fine-tuning, making the use of LLMs more cost-efficient.

By leveraging RAG, companies can use the same model to process and generate responses based on new data, while being able to customise their solution and maintain data relevance.

By contrast, without RAG a model can only draw on its broad, general training data, so it may return incorrect or outdated information, and bringing it up to date requires resource-intensive fine-tuning.

Thus, RAG allows organisations to optimise their integration of LLMs while gaining benefits such as a fact-checking component, up-to-date data and business-specific data. This circumvents the problem of an LLM being trained once and then frozen in time.
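As a minimal sketch of what this means in practice, the helper below places a retrieved passage in the prompt ahead of the user's question. The function name `build_messages`, the instruction wording and the placeholder passage are assumptions for illustration, not part of any library; only the role/content message format comes from the chat completion calls used later in this article.

```python
from typing import Dict, List, Optional


def build_messages(question: str, context: Optional[str] = None) -> List[Dict[str, str]]:
    """Return chat messages, adding retrieved context to the system message if given.

    Hypothetical helper for illustration only.
    """
    system = "Answer the following question the best you can."
    if context:
        # RAG step: the retrieved passage travels with the prompt, so the
        # model can answer from it instead of from its frozen training data.
        system = f"{system} Use this context: {context}"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


# Without context the model has to rely on what it learned during training.
plain = build_messages("Who won the most recent tournament?")

# With context the same model and the same question get fresh supporting data.
grounded = build_messages(
    "Who won the most recent tournament?",
    context="<passage retrieved from your own data>",
)
```

The model and the question stay the same in both cases; only the prompt changes, which is why no fine-tuning is involved.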

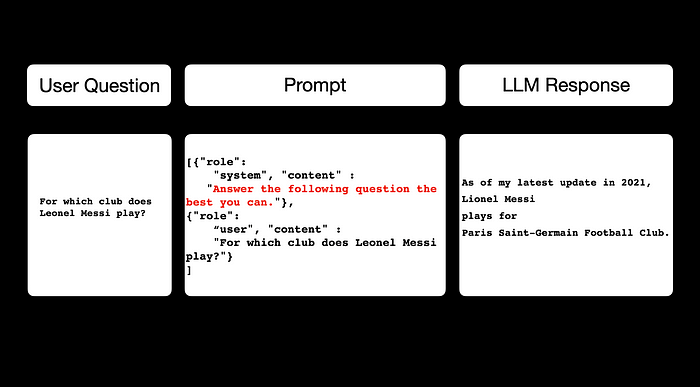

The image below shows a straightforward query being posed to gpt-4-0613, with the question: "For which club does Leonel Messi play?"

The model gives a dated answer and includes a caveat about when its knowledge was last updated.

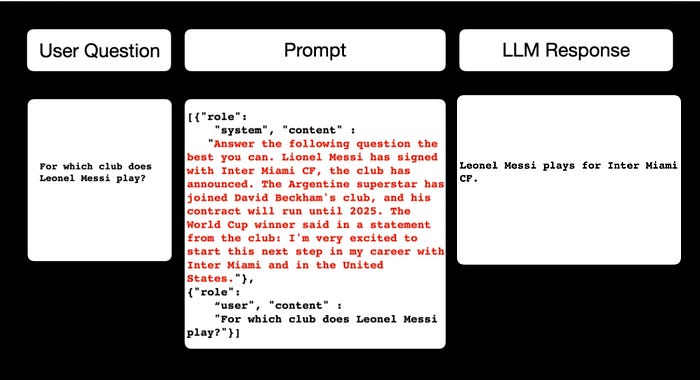

In the image below, the RAG approach is taken: a contextual reference, shown in red, is included in the prompt, and the model responds with the correct answer in this instance.

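Below is the full code to run the example, first without the RAG approach. It uses the legacy `openai.ChatCompletion` interface, which is only available in versions of the `openai` package before 1.0:

    # Install the library first (shell command): pip install "openai<1.0"
    import openai

    # Replace the placeholder with your own API key.
    openai.api_key = "xxxxxxxxxxxxxxxxxxxxxxxxxxx"

    # Ask the question with no supporting context.
    completion = openai.ChatCompletion.create(
        model="gpt-4-0613",
        messages=[
            {"role": "system", "content": "Answer the follwing question the best you can."},
            {"role": "user", "content": "For which club does Leonel Messi play?"},
        ],
    )

    print(completion)

And the answer received is: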

As of my latest update in 2021, Lionel Messi plays for Paris Saint-Germain Football Club.

    {
      "id": "chatcmpl-7dV0qcHEUcl7ffvId0UCfFxhuq6eQ",
      "object": "chat.completion",
      "created": 1689648360,
      "model": "gpt-4-0613",
      "choices": [
        {
          "index": 0,
          "message": {
            "role": "assistant",
            "content": "As of my latest update in 2021, Lionel Messi plays for Paris Saint-Germain Football Club."
          },
          "finish_reason": "stop"
        }
      ],
      "usage": {
        "prompt_tokens": 30,
        "completion_tokens": 22,
        "total_tokens": 52
      }
    }

And then supplying the contextual reference in the prompt:

    # Same question, now with the contextual reference added to the system message.
    completion = openai.ChatCompletion.create(
        model="gpt-4-0613",
        messages=[
            {"role": "system", "content": "Answer the follwing question the best you can. Lionel Messi has signed with Inter Miami CF, the club has announced. The Argentine superstar has joined David Beckham's club, and his contract will run until 2025. The World Cup winner said in a statement from the club: I'm very excited to start this next step in my career with Inter Miami and in the United States."},
            {"role": "user", "content": "For which club does Leonel Messi play?"},
        ],
    )

    print(completion)

With the correct contextual answer: Leonel Messi plays for Inter Miami CF.

    {
      "id": "chatcmpl-7dV7lQYwmtdh4IDRKcjGFfcHK0dB0",
      "object": "chat.completion",
      "created": 1689648789,
      "model": "gpt-4-0613",
      "choices": [
        {
          "index": 0,
          "message": {
            "role": "assistant",
            "content": "Leonel Messi plays for Inter Miami CF."
          },
          "finish_reason": "stop"
        }
      ],
      "usage": {
        "prompt_tokens": 97,
        "completion_tokens": 9,
        "total_tokens": 106
      }
    }

The challenge, of course, is including the correct amount of context, at the right time, at scale.
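The usage figures in the two responses above give a concrete feel for the cost side of that trade-off. As a small worked example (the variable names are illustrative), the snippet below computes how many extra prompt tokens the contextual reference added:

```python
# Usage figures copied from the two API responses above.
usage_without_context = {"prompt_tokens": 30, "completion_tokens": 22, "total_tokens": 52}
usage_with_context = {"prompt_tokens": 97, "completion_tokens": 9, "total_tokens": 106}

# Extra prompt tokens spent on the contextual reference.
context_overhead = (
    usage_with_context["prompt_tokens"] - usage_without_context["prompt_tokens"]
)

# How many times larger the grounded prompt is than the plain one.
prompt_growth = (
    usage_with_context["prompt_tokens"] / usage_without_context["prompt_tokens"]
)

print(f"Context overhead: {context_overhead} prompt tokens")  # 67
print(f"Prompt size: {prompt_growth:.1f}x the original")      # 3.2x
```

A single short passage roughly tripled the prompt here, so at scale the amount of context injected per request has a direct effect on cost and latency.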

A machine learning pipeline approach needs to be taken when compiling the prompt in real time, one that also makes it possible to measure and improve the RAG workflow and to test elements like:

- Data generation

- Automatic prompt creation

- Observing, inspecting and optimising prompt evaluation metrics, etc.
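One way to picture the prompt-compilation step is sketched below: score candidate passages against the question, then keep the best ones that fit a size budget. The word-overlap scoring, the word-count budget and the names `tokenize`, `score` and `select_context` are all simplifying assumptions for illustration; a production pipeline would more likely use embedding-based search and a real tokenizer. The first two passages are taken from the context used earlier in this article, and the third is made-up filler.

```python
import re
from typing import List


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(question: str, passage: str) -> int:
    """Count distinct question words that also appear in the passage."""
    return len(set(tokenize(question)) & set(tokenize(passage)))


def select_context(question: str, passages: List[str], max_words: int) -> List[str]:
    """Pick the highest-scoring passages that fit within max_words in total."""
    ranked = sorted(passages, key=lambda p: score(question, p), reverse=True)
    chosen: List[str] = []
    used = 0
    for passage in ranked:
        if score(question, passage) == 0:
            break  # remaining passages share no words with the question
        size = len(passage.split())
        if used + size <= max_words:
            chosen.append(passage)
            used += size
    return chosen


passages = [
    "Lionel Messi has signed with Inter Miami CF, the club has announced.",
    "The Argentine superstar has joined David Beckham's club, and his contract will run until 2025.",
    "Miami is a city in Florida.",  # made-up filler, irrelevant to the question
]

question = "For which club does Lionel Messi play?"
context = select_context(question, passages, max_words=20)
print(context)
```

With a budget of 20 words only the first passage is selected; raising `max_words` to 30 lets the second one in as well, while the irrelevant filler is never included. Making choices like the budget and the scoring method measurable is where the evaluation metrics listed above come in.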
