Nuance Mix, Conversational AI & Dialog Development

And What Innovations & Points Of Convergence Are Surfacing

Introduction

This article considers 15 general trends and observations drawn from many of the Conversational AI platforms rated as leaders by Gartner and IDC.


The remainder of the article focuses on dialog state development and management in Nuance Mix.

Firstly, General Conversational AI Trends & Observations

A few general trends and observations are taking shape amongst the leading end-to-end Conversational AI platforms…

- Structure is being built into intents in the form of hierarchical, or nested, intents (HumanFirst & Cognigy). Intents can be switched on and off, and weights can be added. Relevance thresholds are set per intent, and in some cases a separate threshold can be set for triggering a disambiguation prompt. Kore.ai supports sub-patterns within intents, as well as sub-intents and follow-up intents.

- Specific intents are linked to specific sections of the flow, and intents and entities are closely coupled, creating a strong association between an intent and specific entities.

- Intents can be dragged, dropped, nested, un-nested (HumanFirst).

- A design-canvas approach to dialog management is proliferating. Dialog management generally supports digression, disambiguation and multi-modality, with graphic design affordances. There is a focus on voicebot integration and enablement. Currently there are four distinct approaches to dialog state management and development.

- Maintaining context across intents and across sections of different dialog flows is also being implemented, especially to make an FAQ journey relevant and accessible from other journeys and intents.

- A SaaS approach, with options for local or private cloud installation.

- Entities can be annotated within intents, and compound entities can be defined. Structure is also being introduced to entities: in the case of LUIS, machine-learned nested entities; elsewhere, structure in the form of regular expressions, lists, relationships, etc. Kore.ai has implemented the idea of user patterns for entities.

- Script, or chatbot dialog prompt, management is improving; this is very helpful for multilingual conversational agents.

- Ease of use, with a non-technical end-to-end solution approach.

- Design and development are merging, which is probably contributing to the demise of design tools like BotSociety.

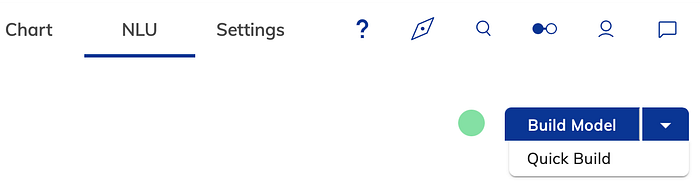

- There is a focus on reducing training time, with solutions offered in the form of incremental training (Cognigy’s quick build, Rasa’s incremental training) or generally faster training (IBM Watson Assistant).

- Irrelevance detection is receiving focus from IBM Watson Assistant, and it is also an element of the HumanFirst workbench.

- Negative patterns can be defined so that misleading intent matches are identified and eliminated. For instance, a user says “I was trying to Book a Flight when I faced an issue.” Though the machine identifies the intent as Book a Flight, that is not what the user wants to do. Defining “was trying to” as a negative pattern ensures that the matched intent is ignored. Kore.ai has this as a standard implementation.

- Traits are a commonplace concept in Kore.ai. Traits are specific entities, attributes, or details that users express in their conversations. An utterance may not directly convey a specific intent, but the traits present in it are used to drive intent detection and the bot's conversation flows.

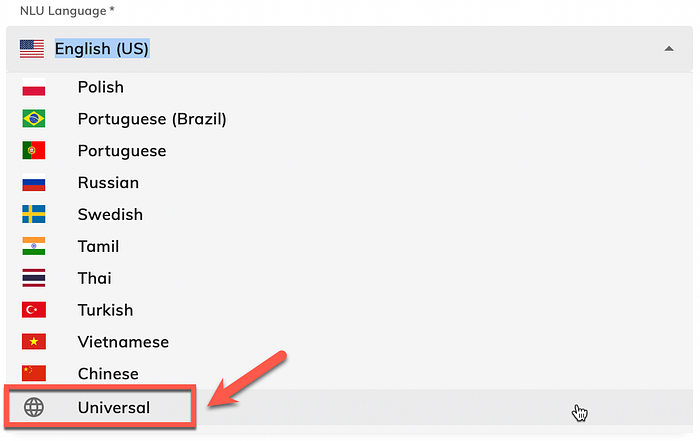

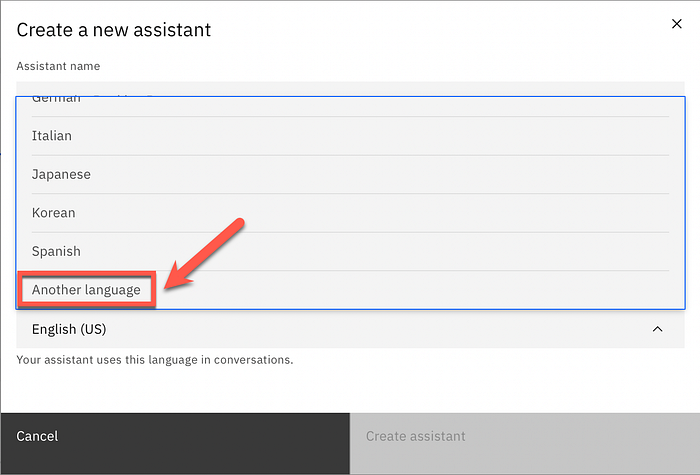

- Support for multiple languages is commonplace. A generic, general-language option is also available in some platforms, such as Cognigy and Watson Assistant. HumanFirst detects languages on the fly, in a very intuitive manner.
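To make a few of these ideas concrete (intents switched on and off, per-intent weights and thresholds, a disambiguation prompt and negative patterns), here is a minimal Python sketch. It assumes some NLU model has already produced a raw confidence score per intent. The `IntentConfig` class, the `resolve_intent` function and the `disambiguation_margin` parameter are hypothetical and do not reflect the API of HumanFirst, Cognigy, Kore.ai or any other platform mentioned above. Negative patterns are matched as plain substrings, which is a simplification.

```python
from dataclasses import dataclass, field


@dataclass
class IntentConfig:
    """Hypothetical per-intent settings, mirroring the trends listed above."""
    name: str
    enabled: bool = True       # intents can be switched on and off
    weight: float = 1.0        # boost or penalty applied to the raw score
    threshold: float = 0.5     # per-intent relevance threshold
    negative_patterns: list[str] = field(default_factory=list)


def resolve_intent(utterance, scores, configs, disambiguation_margin=0.1):
    """Return ("match", name), ("disambiguate", [names]) or ("irrelevant", None).

    `scores` maps intent names to raw confidences from some NLU model (assumed).
    """
    text = utterance.lower()
    candidates = []
    for cfg in configs:
        if not cfg.enabled or cfg.name not in scores:
            continue
        # A negative pattern suppresses the intent even if the model matched it.
        if any(p.lower() in text for p in cfg.negative_patterns):
            continue
        score = scores[cfg.name] * cfg.weight
        if score >= cfg.threshold:
            candidates.append((score, cfg.name))

    if not candidates:
        return ("irrelevant", None)  # nothing cleared its threshold
    candidates.sort(reverse=True)
    top_score = candidates[0][0]
    # Intents scoring close to the top one trigger a disambiguation prompt.
    close = [name for score, name in candidates
             if top_score - score <= disambiguation_margin]
    if len(close) > 1:
        return ("disambiguate", close)
    return ("match", candidates[0][1])


configs = [
    IntentConfig("book_flight", threshold=0.6, negative_patterns=["was trying to"]),
    IntentConfig("report_issue", threshold=0.4),
]
print(resolve_intent("I was trying to Book a Flight when I faced an issue.",
                     {"book_flight": 0.9, "report_issue": 0.55}, configs))
# prints ('match', 'report_issue')
```

In the example, the model's strong Book a Flight score is discarded by the “was trying to” negative pattern, so the weaker but relevant intent wins. If no intent clears its own threshold, the utterance is treated as irrelevant.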

Nuance Mix Dialog Development & Management

On 4 March 2022 Microsoft announced that its acquisition of Nuance had been completed. Many saw Nuance as having missed the boat on Conversational AI; commentators regarded it as a marginalised veteran in an industry it should have dominated.

But two developments changed this:

- The Microsoft acquisition

- Nuance Mix

Nuance Mix was released in beta in February 2020, and its release notes show a steady cadence of two to three releases per month. This level of activity speaks of continued value delivery and investment in an already astounding product.

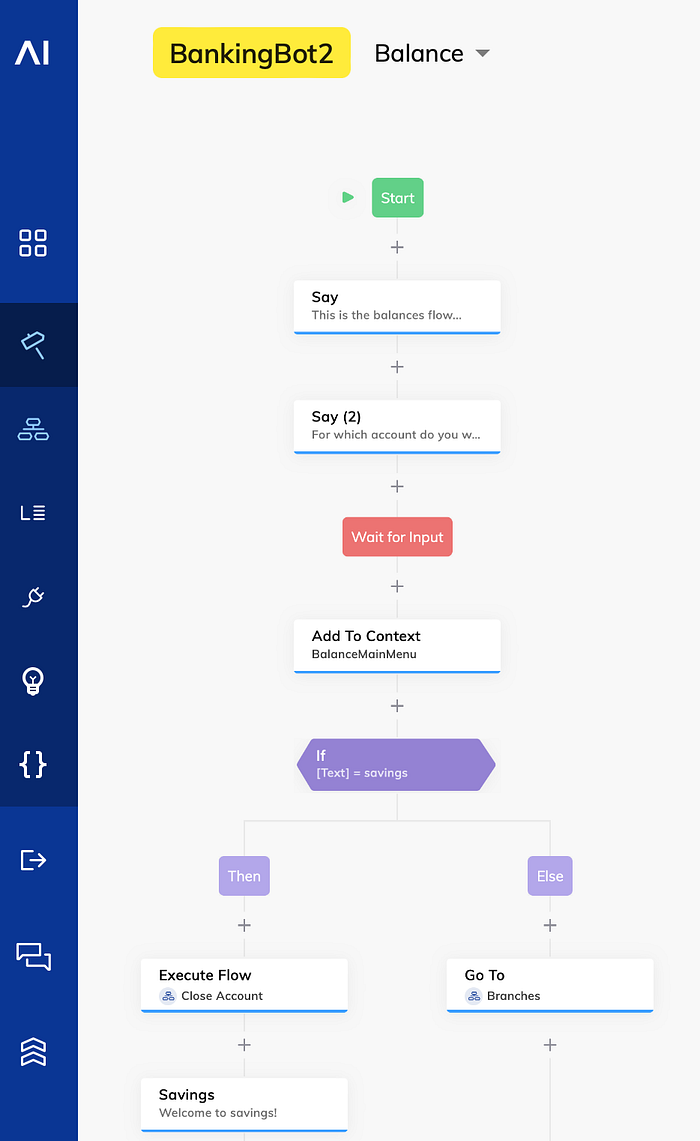

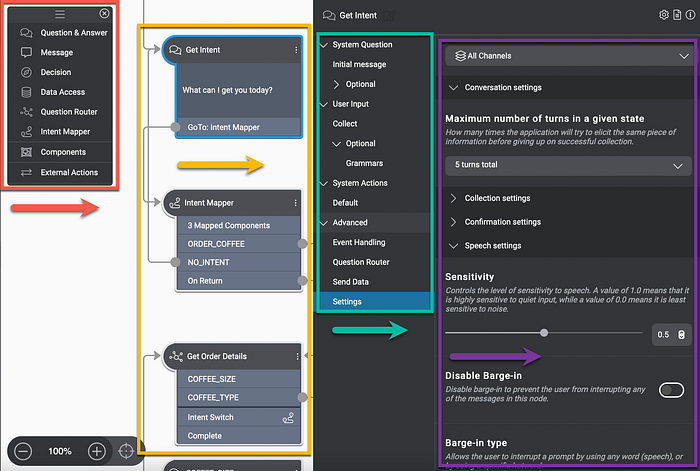

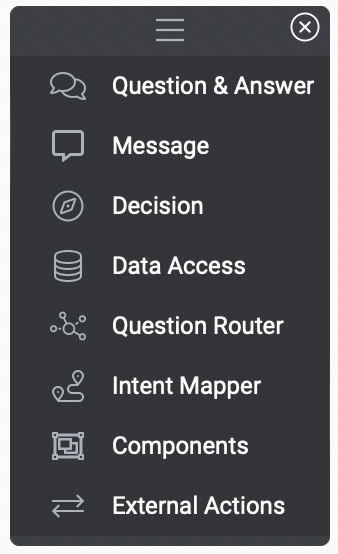

Nuance Mix implements the idea of flow nodes, analogous to how Cognigy and other platforms do it. Channel, or medium, management is very prominent in Mix, which seems to follow a "develop once, deploy multiple times" approach.

Digression is made possible with the Switch Intents option, which allows users to switch intent within a flow. A node can be added to prompt for confirmation before switching, which guards against unintended digression.
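The confirmation step can be sketched as a small state machine. This is a hypothetical Python illustration, not the Nuance Mix API: the `IntentSwitcher` class and its `confirm_before_switch` flag are assumptions standing in for the Switch Intents option and the optional confirmation node.

```python
from typing import Optional


class IntentSwitcher:
    """Hypothetical digression handler; not the Nuance Mix API."""

    def __init__(self, confirm_before_switch: bool = True):
        self.confirm_before_switch = confirm_before_switch
        self.active_intent: Optional[str] = None
        self.pending_intent: Optional[str] = None

    def on_intent(self, detected: str) -> str:
        """Process a newly detected intent and return the bot's next action."""
        if self.active_intent is None or detected == self.active_intent:
            self.active_intent = detected
            return f"continue:{detected}"
        if not self.confirm_before_switch:
            # Switch straight away when no confirmation node is configured.
            self.active_intent = detected
            return f"switch:{detected}"
        # Park the new intent and ask the user before digressing.
        self.pending_intent = detected
        return f"confirm:{detected}"

    def on_confirmation(self, yes: bool) -> str:
        """Apply the user's yes/no answer to a pending switch."""
        if self.pending_intent is None:
            raise RuntimeError("no intent switch is pending")
        if yes:
            self.active_intent = self.pending_intent
        self.pending_intent = None
        return f"continue:{self.active_intent}"


switcher = IntentSwitcher()
switcher.on_intent("book_flight")          # "continue:book_flight"
switcher.on_intent("check_balance")        # "confirm:check_balance"
print(switcher.on_confirmation(False))     # prints continue:book_flight
```

Declining the confirmation keeps the user in the original flow, which is exactly the protection against unintended digression described above.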

A component call node affords the means to enter another component. Separate reusable components are ideal for implementing authentication flows, disambiguation sections, agent transfer routines, etc.

The component call node transfers the dialog flow to the specified component. When the component is finished, the dialog returns to the component call node and proceeds from there. This is reminiscent of a sub-dialog or function call.
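The call-and-return behaviour can be sketched with a return stack, much like a function call. In this hypothetical Python sketch each component is a list of nodes, where `("say", text)` emits a message and `("call", name)` enters another component. The node format, `COMPONENTS` and `run` are assumptions for illustration, not how Nuance Mix represents flows internally.

```python
# Hypothetical flows: each component is an ordered list of nodes.
# ("say", text) emits a message; ("call", name) enters another component.
COMPONENTS = {
    "main": [("say", "Welcome."), ("call", "authenticate"), ("say", "How can I help?")],
    "authenticate": [("say", "Please enter your PIN."), ("say", "Thanks, you are verified.")],
}


def run(components, entry="main"):
    """Execute components with a return stack, like nested function calls."""
    output = []
    stack = [(entry, 0)]  # frames of (component name, index of the next node)
    while stack:
        name, index = stack.pop()
        nodes = components[name]
        if index >= len(nodes):
            continue  # component finished: resume the caller's frame
        kind, arg = nodes[index]
        # Save the resume point in this component before acting on the node.
        stack.append((name, index + 1))
        if kind == "say":
            output.append(arg)
        elif kind == "call":
            stack.append((arg, 0))  # enter the called component at its first node
        else:
            raise ValueError(f"unknown node type: {kind}")
    return output


for line in run(COMPONENTS):
    print(line)
```

Running it prints the welcome message, both authentication prompts, and then "How can I help?": control returns to the node after the component call once `authenticate` finishes. Keeping a stack of resume points means components can call other components to any depth.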

Users can click on a component node in the design canvas to see its properties in the Node properties pane.

As seen above, the dialog development and management workspace can be divided into four areas: the dialog node elements (red), the design canvas (yellow), the properties menu (green) and, lastly, the settings for each of the options (purple).

Conclusion

In the coming days I will be doing more prototyping on Nuance Mix. Suffice it to say that the interface currently feels a bit lean, with limited affordances. It will truly be tested by complex implementations that need to scale.

A few considerations on Nuance Mix’s strong points and a few possible areas of improvement…

The Good

- Nuance Mix Outline is coming soon. It is described as a process of creating multiple conversational paths with the ease of writing transcripts. These dialogs will be converted into a dialog tree or conversation. This is a potential game changer and a much-needed differentiator for Nuance Mix. It could be a blend of Rasa ML stories and a dialog design canvas approach.

- Dialog, or flow, messages to the user can be managed separately, which is reminiscent of Microsoft’s Composer dialog management.

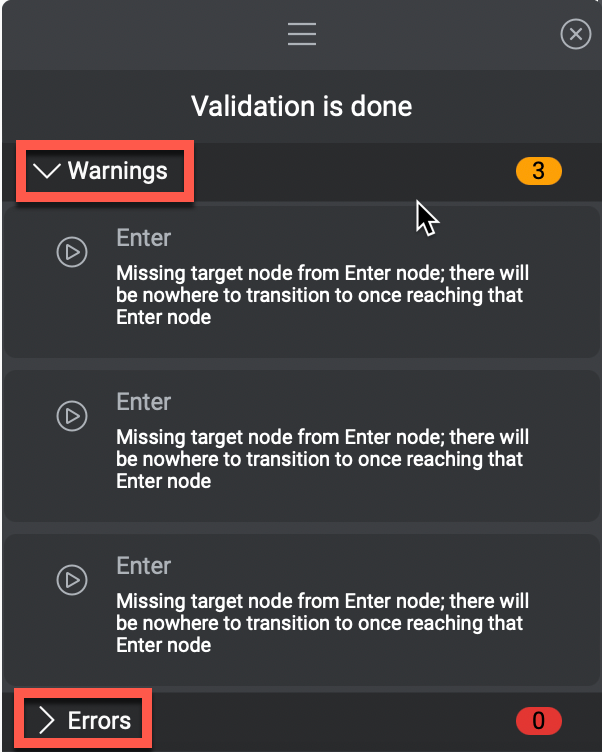

- Validation can be performed on the call flow, with Warnings & Errors. This can save much time in debugging and troubleshooting.

- Channel views are available for testing, hence Nuance Mix can be used to combine different modalities into one design or voice application.

- Different components can be created. These components can be seen as segments of the conversation. Components can be linked via intents, and the conversation can digress from one component to the other.

- As with Cognigy, when the application is tested via the test pane, the current position or state of the conversation is displayed within the dialog flow.

- The selection of available nodes might seem limited, but each node type has extensive settings. The approach is to surface simplicity while keeping complexity available under the hood when needed.
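As a rough illustration of what call flow validation can check, here is a hypothetical Python sketch that treats a flow as a graph of named nodes. It reports transitions to undefined nodes as errors, and dead ends or nodes unreachable from the start node as warnings. The specific checks Nuance Mix performs may differ; the rules, `validate_flow` and its parameters are assumptions.

```python
from collections import deque


def validate_flow(nodes, start, end_nodes=()):
    """Return (errors, warnings) for a hypothetical flow graph.

    `nodes` maps each node name to the list of node names it can transition to.
    """
    errors, warnings = [], []
    if start not in nodes:
        errors.append(f"start node '{start}' is not defined")
        return errors, warnings
    for name, targets in nodes.items():
        for target in targets:
            if target not in nodes:
                errors.append(f"'{name}' transitions to undefined node '{target}'")
        if not targets and name not in end_nodes:
            warnings.append(f"'{name}' has no outgoing transition")
    # Breadth-first search from the start node to find unreachable nodes.
    seen = {start}
    queue = deque([start])
    while queue:
        for target in nodes[queue.popleft()]:
            if target in nodes and target not in seen:
                seen.add(target)
                queue.append(target)
    for name in nodes:
        if name not in seen:
            warnings.append(f"'{name}' is unreachable from '{start}'")
    return errors, warnings


flow = {
    "start": ["ask_pin"],
    "ask_pin": ["verify", "end"],
    "verify": ["transfer"],   # 'transfer' is never defined: an error
    "end": [],
    "orphan": ["end"],        # nothing leads here: a warning
}
errors, warnings = validate_flow(flow, "start", end_nodes=("end",))
print(errors)
print(warnings)
```

Separating hard errors (the flow cannot run as designed) from warnings (the flow runs but probably not as intended) mirrors the Warnings & Errors split mentioned above.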

A Few Considerations

- The design and development canvas is not interactive; the menus and settings on the side need to be used instead. It does feel a bit limiting not to be able to drag, drop and manipulate nodes by moving them on the canvas.

- The grey user interface is probably not ideal.

- Nuance’s strong points are definitely multi-modality, STT, TTS and speech enablement in general. There is, however, room for improvement with regard to the dialog tree interface.