Two Approaches To Irrelevance Detection In Chatbots

Comparing How IBM Watson Assistant & HumanFirst Handle Out-Of-Domain User Utterances…

Introduction

In general, chatbots are designed and developed for a specific domain, unlike general-purpose assistants such as Google Home, Alexa and Siri.

These domains are narrow and relevant to the organisation’s products, services etc.

To make the chatbot more interactive and lifelike, and to anthropomorphise the interface, small talk (also referred to as chitchat) can be introduced.

But what happens if a user utterance falls outside this narrow domain? In most implementations the highest-scoring intent is assigned to the user’s utterance, in a frantic attempt to field the query. Alternatively, the top three returned intents are used to create a disambiguation menu.

Would the ideal not be for the chatbot to tell the user, in a nice way, that a request is out of domain? 🙂
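To make the three outcomes concrete (answer, disambiguate, or admit the request is out of domain), here is a minimal sketch in Python. It is a generic illustration and not how any particular platform implements it; the function name `route` and both threshold values are assumptions chosen for the example.

```python
from typing import List, Tuple

# Hypothetical thresholds; in practice they are tuned on real conversation logs.
OUT_OF_DOMAIN_THRESHOLD = 0.2
DISAMBIGUATION_MARGIN = 0.1


def route(intents: List[Tuple[str, float]]) -> Tuple[str, List[str]]:
    """Decide what to do with a list of (intent, confidence) pairs.

    Returns one of:
      ("out_of_domain", [])            nothing scored high enough
      ("disambiguate", [2 or 3 names]) the top scores are too close to call
      ("answer", [top intent])         there is a clear winner
    """
    ranked = sorted(intents, key=lambda pair: pair[1], reverse=True)
    if not ranked or ranked[0][1] < OUT_OF_DOMAIN_THRESHOLD:
        return ("out_of_domain", [])
    top_score = ranked[0][1]
    # Among the top three, keep the intents that score close to the winner.
    close = [name for name, score in ranked[:3]
             if top_score - score <= DISAMBIGUATION_MARGIN]
    if len(close) > 1:
        return ("disambiguate", close)
    return ("answer", [ranked[0][0]])


print(route([("Cancel", 0.9), ("Book", 0.3)]))
print(route([("Cancel", 0.5), ("Book", 0.45), ("Greet", 0.2)]))
print(route([("Cancel", 0.1), ("Book", 0.05)]))
```

The first call has a clear winner, the second has two intents within the margin of each other, and the third falls below the out-of-domain threshold, so the bot can politely say the request is outside its scope.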

This article takes a look at how IBM Watson Assistant does it, and then how HumanFirst approaches this challenge…

First, An Update On All Things Watson Assistant Related

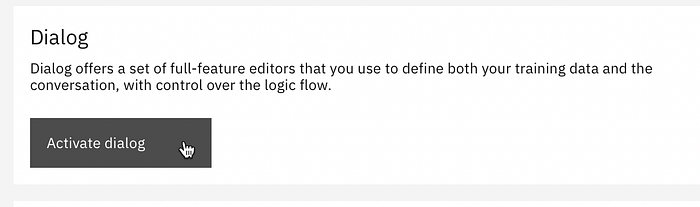

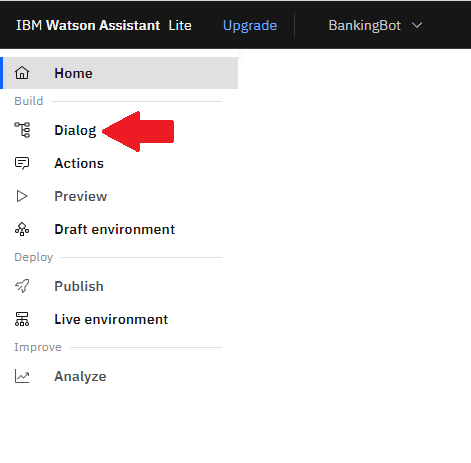

- On 5 April 2022 Dialog Skills were enabled in the new Watson Assistant experience. If you have a Dialog Skill based assistant that was built using the classic Watson Assistant, you can now migrate your dialog skill to the new Watson Assistant experience.

- This is a significant step forward in bolstering their new interface and experience. Watson Assistant’s dialog management approach within Dialog Skills is really unique, in the sense that it is a very simplified approach with a state-machine-like interface.

- IBM is continuously adding new functionality to Action Skills, like enhanced debugging and variable handling, user response flexibility, dynamic URLs, improved fine-tuning, disambiguation, digression, and a focus on web deployment.

- Action Skills can be used in a stand-alone mode and can indeed constitute a complete chatbot. Action Skills can also be used as sub-dialogs or extensions to dialog skills. This is the true power of Action Skills.

- Users can revert to the old experience should they wish to do so. It is quick and efficient to toggle between the two experiences, should you have active workspaces in both.

- IBM is executing well on their strategy, despite some unfounded doubts. Their strategy is unfolding in a number of ways, and it is easy to miss as many changes are intuitive. For instance, irrelevance detection is still available, as seen in the testing pane, but it is orchestrated under the hood and the toggle option has been removed from dialog settings.

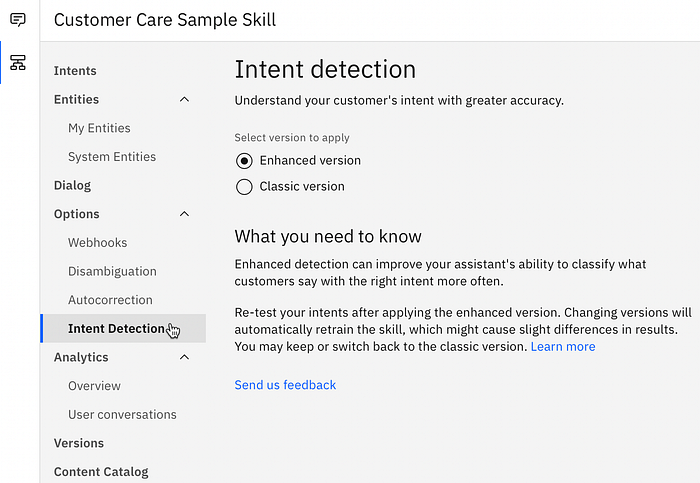

- Watson Assistant has a very steady cadence of new releases, including enhancements on irrelevance detection, fuzzy matching, spelling autocorrection and web chat focused enhancements.

- IBM Cloud is not following the lead of Kore AI, Cognigy and Nuance Mix, which offer full functionality access sans a credit card. This approach by the three platforms is a significant enabler. IBM Cloud does have 40 services available for free, of which Watson Assistant Lite is one, but you need to supply credit card details to register an account.

- IBM Watson Assistant does not have incremental training of the NLU model like other platforms, but they have significantly shortened their training time, which is more efficient in the long run and simplifies matters.

- There are user example recommendations, which make it easier to identify new phrasing for your intents: Watson Assistant will recommend new user examples.

- Watson Assistant has not yet added any hierarchical structure or functionality to their intents. Intent examples can be annotated and WA tokenizes the utterances for easy annotation.

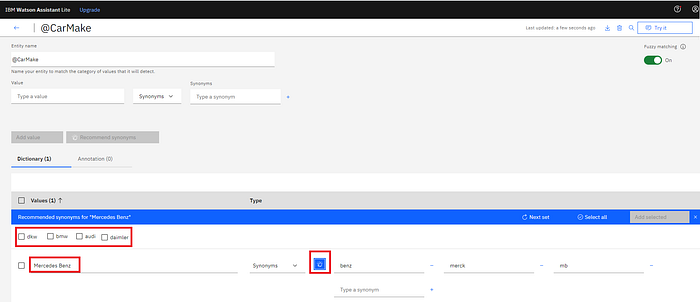

- Entities also do not have much structure, apart from synonym generation, fuzzy matching etc.

Irrelevance Detection In IBM Watson Assistant

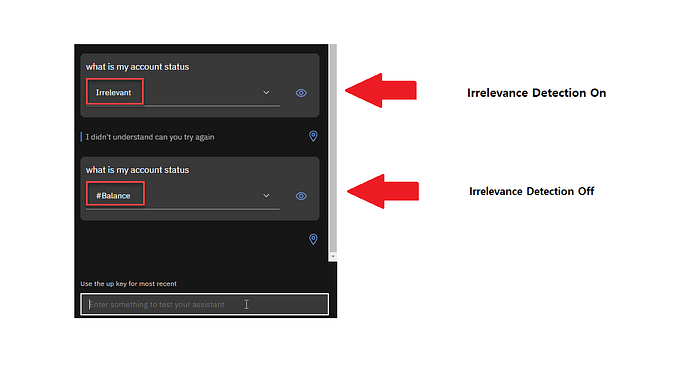

On 7 November 2019 irrelevance detection was added to Watson Assistant. When enabled, a supplemental model is used to help identify utterances that are irrelevant and should not be answered by the dialog skill.

There are two ways to define an utterance as irrelevant. The first is via the test pane, as seen below…

The other and perhaps more efficient way is to add irrelevance or counter examples via the JSON payload.

```json
{
  "intents": [
    {
      "intent": "Cancel",
      "examples": [
        { "text": "cancel that" },
        { "text": "cancel the request" },
        { "text": "forget it" },
        { "text": "i changed my mind" },
        { "text": "i don't want a table anymore anymore" },
        { "text": "never mind" },
        { "text": "nevermind" }
      ],
      "description": "Cancel the current request"
    }
  ]
}
```

Above is an excerpt showing how an intent is defined within a Dialog Skill JSON payload.

```json
{
  "counterexamples": [
    { "text": "Africa is a content" },
    { "text": "I need to sleep now." },
    { "text": "I want to jump into the pool" }
  ]
}
```

And above are the utterances used for irrelevance, or counter example, training.
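Editing the payload by hand becomes tedious once there are more than a handful of counter examples. Below is a minimal sketch in Python that appends counter examples to an exported skill loaded as a dictionary. It assumes the export has a top-level `counterexamples` list shaped like the excerpt above; the helper name `add_counterexamples` is hypothetical, and only the standard library is used.

```python
import json
from typing import Iterable


def add_counterexamples(skill: dict, texts: Iterable[str]) -> dict:
    """Return a copy of the skill with new counter examples appended.

    Texts that are already present (compared case-insensitively) are skipped.
    Assumes a top-level "counterexamples" list of {"text": ...} objects.
    """
    updated = json.loads(json.dumps(skill))  # deep copy of plain JSON data
    existing = updated.setdefault("counterexamples", [])
    seen = {item["text"].strip().lower() for item in existing}
    for text in texts:
        key = text.strip().lower()
        if key and key not in seen:
            existing.append({"text": text.strip()})
            seen.add(key)
    return updated


skill = {
    "intents": [{"intent": "Cancel", "examples": [{"text": "never mind"}]}],
    "counterexamples": [{"text": "I need to sleep now."}],
}
updated = add_counterexamples(
    skill, ["I want to jump into the pool", "i need to sleep now."]
)
print(json.dumps(updated["counterexamples"], indent=2))
```

The second utterance is skipped because it duplicates an existing counter example, and the original `skill` dictionary is left untouched, so the result can be reviewed before it is written back to a file and imported.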

A few key considerations:

- Irrelevance detection is a key and distinguishing feature of any Conversational AI system. Enhancements to it are important, and this development is surely a good sign.

- This feature is available in both Dialog Skills & Action Skills, both in the old and the new experience.

Utterances with an assigned intent can be marked as irrelevant and saved as counter examples in the workspace, and are hence included as part of the training data. This teaches the chatbot to explicitly not answer utterances of this nature.

The HumanFirst Approach

HumanFirst has interface options available in the form of:

- Web based UI

- API Interface

- CLI

- Pipelines

Here we will focus only on the web UI in general, and on intents in particular.

Develop For User Input, & Not For What Is Relevant To Pre-Designed Journeys

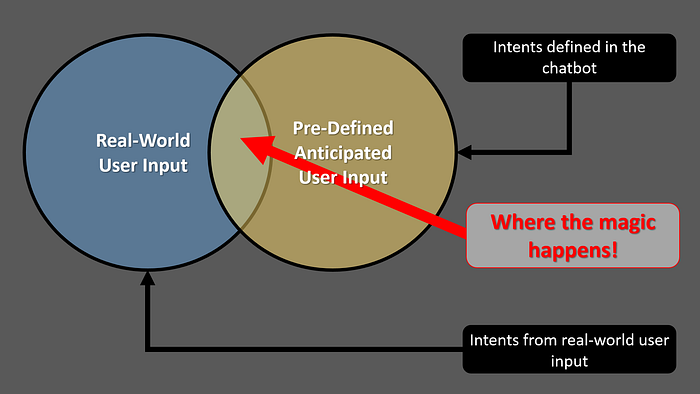

The HumanFirst approach is to take any customer utterances or conversations, and generate intents from those utterances, automatically. A user can set granularity and cluster size, and HumanFirst auto-detects groups of utterances. This is done with completely raw data: no annotation, no predefined tags and no predefined entities!

The intersection of the Venn diagram is where the chatbot is effective. This overlap needs to be grown to such an extent that the two circles largely merge.

The aim of HumanFirst is to not have makers start with pre-defined journeys and intents, and continuously retrofit those on actual customer behaviour.

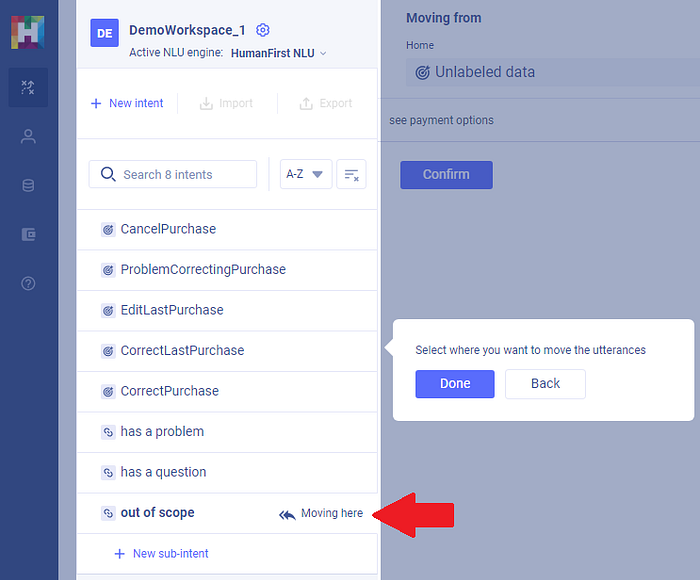

Rather, the aim is to work from customer experiences forward. Below you see that an unlabeled utterance can be moved to the out of scope intent. Notice that the icon is different. Out of scope plays a role in the process of training the model.

HumanFirst does not require formatted data from a specific NLU solution, and it is not solely a tool for analyzing data exported from NLU solutions. Raw user utterances & conversations are organized in seconds, and irrelevant utterances can quickly be detected.
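To give a feel for what grouping raw utterances involves, here is a deliberately simple sketch in Python. It is not HumanFirst's algorithm, which the source does not describe. It swaps in greedy clustering on word overlap (Jaccard similarity), where a production system would more likely use sentence embeddings. The `granularity` and `min_cluster_size` parameters are assumptions that loosely mirror the granularity and cluster size settings mentioned earlier.

```python
from typing import List, Set, Tuple


def tokens(utterance: str) -> Set[str]:
    """Lowercase an utterance and split it into a set of word tokens."""
    return set(utterance.lower().split())


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Share of tokens that two utterances have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster(utterances: List[str], granularity: float = 0.3,
            min_cluster_size: int = 2) -> Tuple[List[List[str]], List[str]]:
    """Greedily group utterances and return (clusters, leftovers).

    An utterance joins the first group whose seed (its first member) it
    resembles with Jaccard similarity >= granularity; otherwise it starts a
    new group. Groups smaller than min_cluster_size become leftovers, which
    are candidates for human review, for example as out of scope.
    """
    groups: List[List[str]] = []
    for utterance in utterances:
        for group in groups:
            if jaccard(tokens(utterance), tokens(group[0])) >= granularity:
                group.append(utterance)
                break
        else:  # no existing group was similar enough
            groups.append([utterance])
    clusters = [g for g in groups if len(g) >= min_cluster_size]
    leftovers = [u for g in groups if len(g) < min_cluster_size for u in g]
    return clusters, leftovers


clusters, leftovers = cluster([
    "cancel my booking",
    "please cancel my booking",
    "book a table for two",
    "book a table for tonight",
    "I want to jump into the pool",
])
print(clusters)
print(leftovers)
```

With this toy data the two cancel utterances and the two booking utterances form candidate intents, while the pool utterance is left over. Raising `granularity` produces more, tighter groups; lowering it merges more utterances together.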

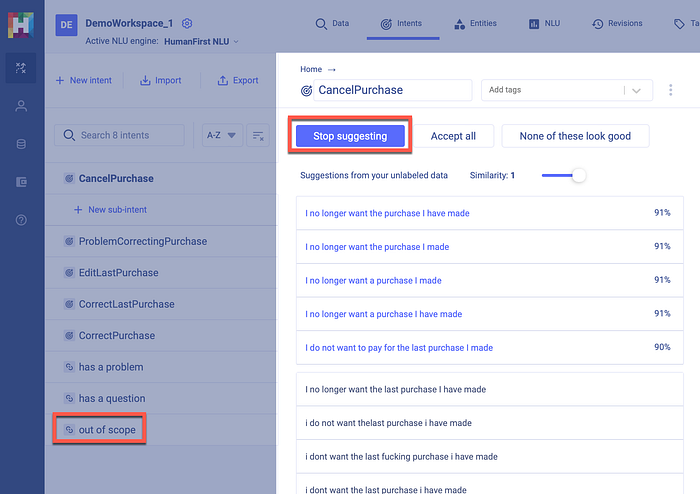

Whilst setting similarity, suggestions are made from utterance data. These suggestions, which are scored, can be added to an intent or moved to out of scope.

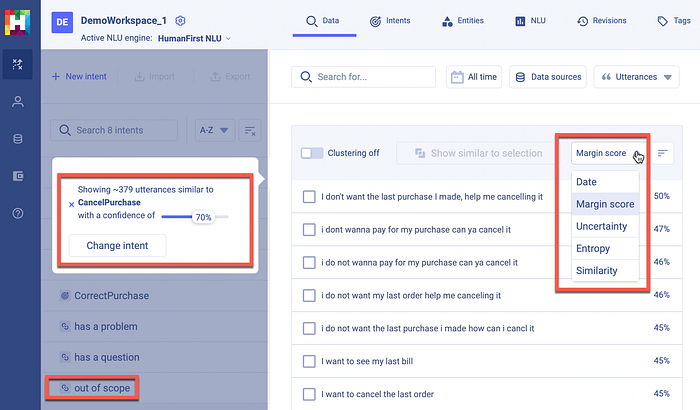

Utterances associated with an intent can be organized according to Date, Margin Score, Uncertainty, Entropy or Similarity.
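The source does not spell out how HumanFirst calculates these scores, so the sketch below uses the textbook definitions commonly applied in active learning: margin as the gap between the two highest intent probabilities, uncertainty as one minus the highest probability, and entropy as Shannon entropy. The function names and the example probabilities are assumptions made for illustration.

```python
import math
from typing import Dict


def margin_score(probs: Dict[str, float]) -> float:
    """Gap between the two highest intent probabilities (small = ambiguous)."""
    ranked = sorted(probs.values(), reverse=True)
    if not ranked:
        return 0.0
    return ranked[0] - ranked[1] if len(ranked) > 1 else ranked[0]


def uncertainty(probs: Dict[str, float]) -> float:
    """Least-confidence score: one minus the highest probability."""
    return 1.0 - max(probs.values(), default=0.0)


def entropy(probs: Dict[str, float]) -> float:
    """Shannon entropy in bits; higher means the prediction is more spread out."""
    return -sum(p * math.log2(p) for p in probs.values() if p > 0)


# Hypothetical model output: intent probabilities per utterance.
predictions = {
    "cancel that": {"Cancel": 0.90, "Book": 0.05, "Greet": 0.05},
    "can you sort out my table": {"Cancel": 0.40, "Book": 0.35, "Greet": 0.25},
}

# Smallest margin first: the utterances most in need of a human look.
review_order = sorted(predictions, key=lambda u: margin_score(predictions[u]))
print(review_order)
```

Sorting by ascending margin, or by descending uncertainty or entropy, surfaces the utterances the model is least sure about, which are often the best candidates to relabel or move to out of scope.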

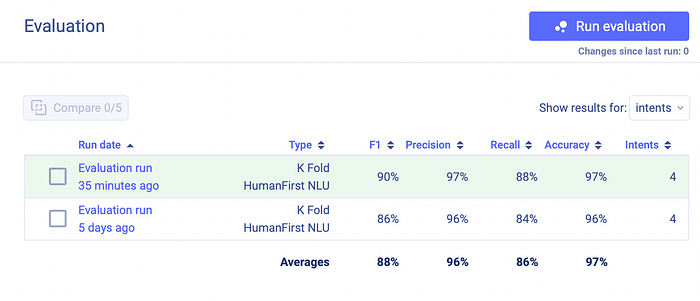

Lastly, the newly trained model can be compared to previous models based on five metrics.

To read more about the meaning of the metrics, below is a very good explanation.

Conclusion

There is a definite place for Intent-Driven Development, and out-of-scope or irrelevance can be defined from the outset. This is where user utterances and conversations dictate the journeys which are available.

There are exceptions of course. For instance, in the case of new products & services, the bot might need to proactively suggest to or prompt the user, introducing something new which they were not aware of.