What Does The OpenAI 16K Context Window Mean?

OpenAI launched a new model called gpt-3.5-turbo-16k with a context window of roughly 16,000 tokens, which means you can submit a document of about 12,000 words to OpenAI in one go.

I’m currently the Chief Evangelist @ HumanFirst. I explore and write about all things at the intersection of AI and language; ranging from LLMs, Chatbots, Voicebots, Development Frameworks, Data-Centric latent spaces and more.

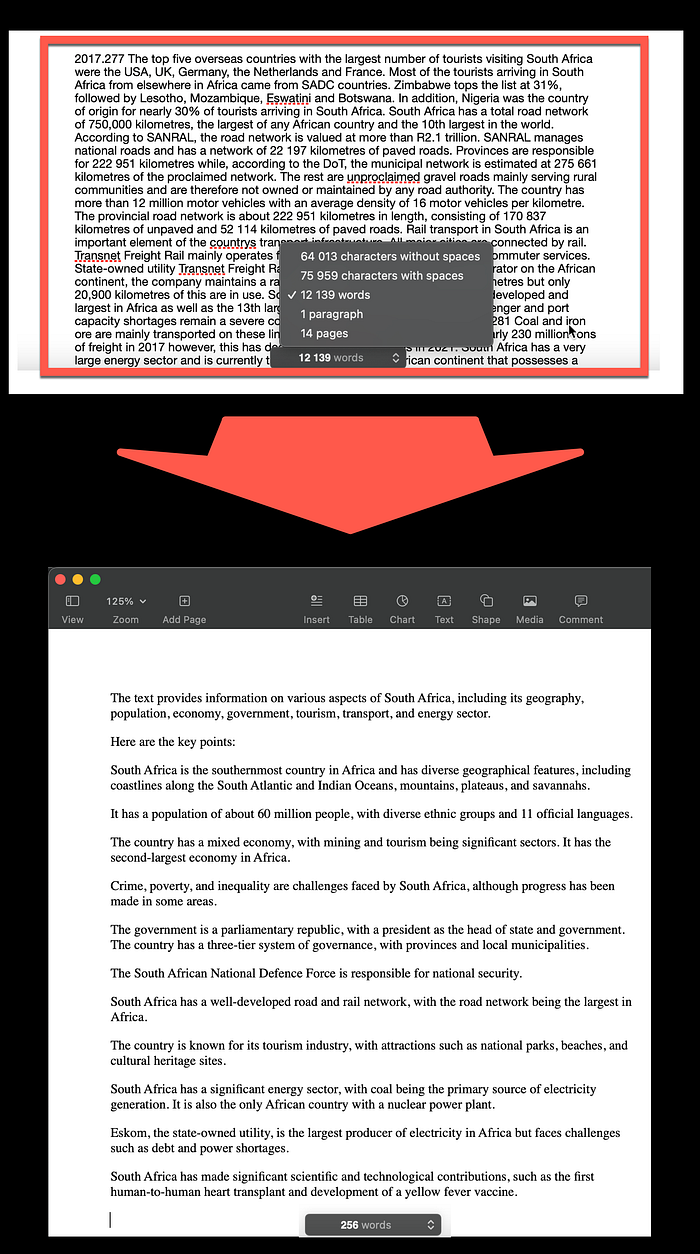

The image below shows an extract of a 12,139-word, 14-page document which I submitted to the new OpenAI model, with the result at the bottom:

I found it intriguing that the GPT-3.5-Turbo-16k model is a chat-only model and is not supported by the Completions Endpoint. The following is the error message I received:

InvalidRequestError: This is a chat model and not supported in the v1/completions endpoint. Did you mean to use v1/chat/completions?

Given this, I had to choose the chat endpoint and the ChatML notation for input.

As an example, below is a working Python application accessing the 16k model:

```python
import os

# Note: this uses the pre-1.0 openai Python SDK interface (openai.ChatCompletion).
import openai

# Prefer reading the key from the environment; the string is only a placeholder.
openai.api_key = os.getenv("OPENAI_API_KEY", "xxxxxxxxxxxxxxxxxxxx")

completion = openai.ChatCompletion.create(
    model="gpt-3.5-turbo-16k",
    messages=[
        {"role": "system", "content": "You are a chatbot which can search text and provide a summarised answer."},
        {"role": "user", "content": "How are you?"},
        {"role": "assistant", "content": "I am doing well"},
        {"role": "user", "content": "What is the distance between New York and Montreal?"},
    ],
)

# Print the full response object returned by the chat endpoint.
print(completion)
```

When I attempted to submit a document which exceeded the maximum context window, I received an informative error message stating the model's limit and the size of my request:

InvalidRequestError: This model’s maximum context length is 16385 tokens. However, your messages resulted in 18108 tokens. Please reduce the length of the messages.
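One way to avoid this error is to estimate the size of a document before sending it. The sketch below is only an approximation, not OpenAI's tokenizer: it assumes the common rule of thumb of roughly 0.75 English words per token, and the names `estimate_tokens`, `fits_context` and `reply_reserve` are hypothetical. The context window is shared between the messages and the model's reply, so it helps to leave some room for the answer. For an exact count, a tokenizer library such as tiktoken would be needed.

```python
import math

# Limit quoted in the error message above for gpt-3.5-turbo-16k.
MAX_CONTEXT_TOKENS = 16385

# Assumption: rule of thumb of ~0.75 English words per token.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(text: str) -> int:
    """Roughly estimate tokens from the whitespace-separated word count."""
    return math.ceil(len(text.split()) / WORDS_PER_TOKEN)


def fits_context(text: str, reply_reserve: int = 0) -> bool:
    """Return True if the estimated prompt plus reserved reply tokens fit."""
    return estimate_tokens(text) + reply_reserve <= MAX_CONTEXT_TOKENS


# Stand-in for a 12,139-word document.
document = "word " * 12139

print(estimate_tokens(document))                  # 16186
print(fits_context(document))                     # True
print(fits_context(document, reply_reserve=500))  # False
```

By this estimate a 12,139-word document only just fits, which leaves little room for a long reply. The chat format also adds a few tokens per message, so a real count will be slightly higher than the estimate.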

16k context means the model can now support ~20 pages of text in a single request.

- OpenAI

The new OpenAI model alleviates one of the long-running ailments of LLMs: the limited context window, which forces developers to pre-process data (for example, by splitting or summarising documents) before submitting it.
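For documents that are still too large for the window, that pre-processing often means splitting the text into pieces that each fit. Here is a minimal sketch, assuming a rough rule of thumb of 0.75 words per token rather than a real tokenizer; the helper name `chunk_words` and the 30,000-word stand-in document are hypothetical.

```python
from typing import List


def chunk_words(text: str, max_tokens: int, words_per_token: float = 0.75) -> List[str]:
    """Split text into consecutive chunks whose estimated size stays within max_tokens.

    Assumption: token counts are approximated as words / words_per_token.
    """
    words_per_chunk = max(1, int(max_tokens * words_per_token))
    words = text.split()
    return [
        " ".join(words[i:i + words_per_chunk])
        for i in range(0, len(words), words_per_chunk)
    ]


# Hypothetical 30,000-word document, split with headroom below the 16k limit.
document = " ".join(f"w{i}" for i in range(30000))
chunks = chunk_words(document, max_tokens=12000)

print(len(chunks))             # 4
print(len(chunks[0].split()))  # 9000
```

Splitting on word boundaries is the simplest option but can cut a sentence in half. Splitting on paragraphs or headings usually gives the model more coherent input.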

The amount of context this model can manage is remarkable, and its speed and accuracy make it an invaluable development.

The response ran to 256 words, a one-sentence summary followed by 12 key points, and it clearly shows how significant this progress is.

