Enable Your Chatbot To Disambiguate Intelligently With Automatic Learning

Disambiguation Is Part Of Natural Human Conversation

Introduction

Your Chatbot Must Be Enabled For Disambiguation

Instead of defaulting to the intent with the highest confidence, the chatbot should check the confidence scores of the top five matches.

If these scores are close to each other, the chatbot is effectively saying that no single intent clearly addresses the query, and a selection must be made from a few options.

Here, disambiguation allows the chatbot to request clarification from the user.

A list of related options should be presented to the user, allowing the user to disambiguate the dialog by selecting the best-suited option from the list.

However, the list should be relevant to the context of the utterance, so only contextual options should be presented.

Disambiguation enables chatbots to request help from the user when more than one dialog node might apply to the user’s query.

Instead of assigning the best-guess intent to the user’s input, the chatbot can create a collection of top nodes and present them. In this case, when there is ambiguity, the decision is deferred to the user.

The real win-win is when the feedback from the user can be used to improve your NLU model via automatic learning, as this is invaluable training data vetted by the user.

Disambiguation can be triggered when the confidence scores of the runner-up intents detected in the user input are close in value to that of the top intent.

Hence there is no clear separation and certainty.

There should of course be a “none of the above” option. If a user selects this, a real-time live agent handover can be performed, or a call-back can be scheduled. Alternatively, a broader set of options can be presented.
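The trigger described above can be sketched in a few lines of Python. This is only an illustration of the idea, not how Watson Assistant implements it: the function name `disambiguation_options`, the `closeness` threshold of 0.2 and the assumed input shape (a list of dictionaries with `intent` and `confidence` keys) are all hypothetical choices made for this example.

```python
from typing import Dict, List

NONE_OF_THE_ABOVE = "None of the above"


def disambiguation_options(
    intents: List[Dict],
    closeness: float = 0.2,   # assumed threshold, chosen for illustration only
    max_options: int = 5,     # the article suggests checking the top five matches
) -> List[str]:
    """Return the menu to show the user, or an empty list if no menu is needed."""
    # Rank the detected intents from most to least confident and keep the top few.
    ranked = sorted(intents, key=lambda i: i["confidence"], reverse=True)
    ranked = ranked[:max_options]
    if len(ranked) < 2:
        return []

    # Keep every intent whose score is close to the top score.
    top_score = ranked[0]["confidence"]
    close = [
        i["intent"] for i in ranked
        if top_score - i["confidence"] <= closeness
    ]

    # Only one intent near the top means there is a clear winner.
    if len(close) < 2:
        return []

    # Always give the user a way out of the menu.
    return close + [NONE_OF_THE_ABOVE]
```

With scores of 0.42, 0.40 and 0.10, the first two intents are offered as a menu together with the escape option. With scores of 0.92 and 0.15 the function returns an empty list, and the top intent can simply be used.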

Introducing Automatic Learning

In order to augment disambiguation, on 19 August 2020 IBM Watson Assistant launched autolearning.

The tagline from IBM is: “Empower your skill to learn automatically with autolearning.”

This sounds very promising, and is indeed a step in the right direction.

The big question of course is to what extent it learns automatically.

After building a prototype I have learned the following:

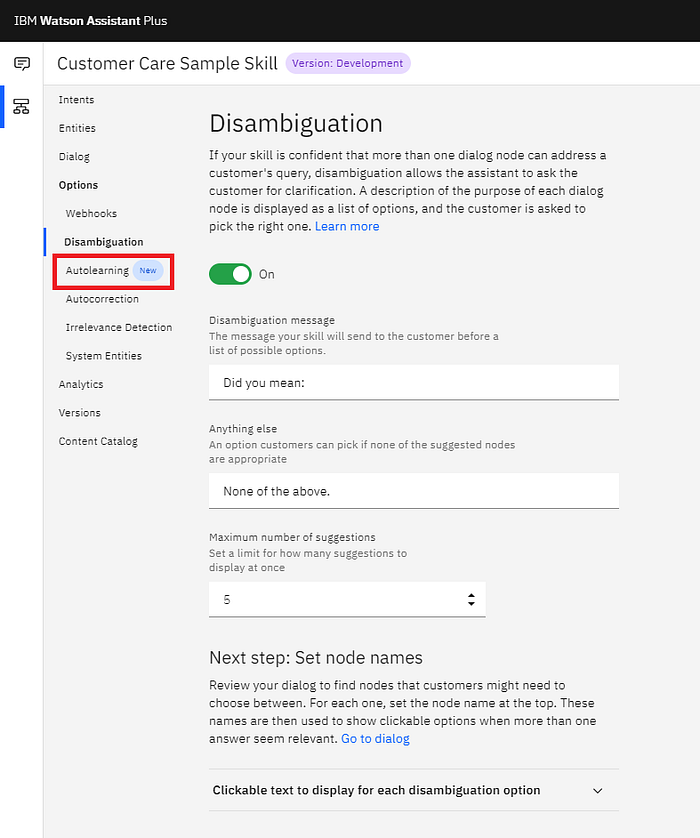

- It only works when disambiguation is activated and in use.

- Disambiguation relies on a menu.

- The menu sequence is adjusted based on popularity.

- No changes are made to your dialog, entities or intents.

- New utterances show up in Intent Recommendations.

Lending Structure To A Conversation

The ideal chatbot conversation is just that, conversation-like, in natural language and highly unstructured. When the conversation is not gaining traction, it does make sense to introduce a form of structure.

This form of structure is ideally:

- A short menu of 3 to 4 items presented to the user.

- With menu items linked to the context of the last dialog.

- Acting to disambiguate the general context.

- And an option for the user to establish undetected context.

Once the context is confirmed by the user, the structure can be removed from the conversation, which can then continue unstructured in natural language.

The brief introduction of structure is merely there as a mechanism to further the dialog. This serves as a remedy against fallback proliferation.

The idea behind autolearning is to order these disambiguation menus according to use or user popularity.

More On Autolearning

In short, your chatbot learns from interactions between your customers and your assistants.

Autolearning uses observations of actions that are made by only your customers to improve only your skills.

Example Of Disambiguation Using IBM Watson Assistant

Autolearning improves the quality of your skill over time. It applies insights that it gains from observing customer interactions to help the skill identify and surface correct answers more often.

The underlying mechanics of autolearning are not visible or tweakable.

This speaks to the general approach of moving complexity away from the user and presenting a simpler UI.

You can use the Customer Effort Notebook to analyze how autolearning is improving the user experience.

A practical example:

When a customer asks a question that the assistant isn’t sure it understands, the assistant often shows a list of topics to the customer and asks the customer to choose the right one.

This process is called disambiguation.

If, when a similar list of options is shown, customers most often click the same option (option #2, for example), then your skill can learn from that experience.

It can learn that option #2 is the best answer to that type of question. And next time, it can list option #2 as the first choice, so customers can get to it more quickly.

And, if the pattern persists over time, it can change its behavior even more. Instead of making the customer choose from a list of options at all, it can return option #2 as the answer immediately.

The premise of this feature is to improve the disambiguation process over time to such an extent that eventually the correct option is presented to the user automatically. Hence the chatbot learns how to disambiguate on behalf of the user.
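Since the real mechanics of autolearning are hidden, the behavior described above can only be approximated. The toy sketch below counts which menu option users pick, reorders the menu by popularity, and answers directly once one option dominates. The class `SelectionTracker` and its parameters `min_observations` and `auto_select_share` are hypothetical names invented for this illustration and say nothing about how IBM actually does it.

```python
from collections import Counter
from typing import List, Optional


class SelectionTracker:
    """Toy model of popularity-based menu ordering (illustrative assumption)."""

    def __init__(self, min_observations: int = 10, auto_select_share: float = 0.8):
        self.counts = Counter()                     # option -> number of times chosen
        self.min_observations = min_observations    # evidence needed before auto-answering
        self.auto_select_share = auto_select_share  # share of picks needed to skip the menu

    def record(self, option: str) -> None:
        """Register that a user picked this option from the menu."""
        self.counts[option] += 1

    def order(self, options: List[str]) -> List[str]:
        """Most popular option first; the sort is stable, so ties keep their order."""
        return sorted(options, key=lambda o: -self.counts[o])

    def auto_answer(self, options: List[str]) -> Optional[str]:
        """Return an option to answer with directly, or None to keep showing the menu."""
        total = sum(self.counts[o] for o in options)
        if total < self.min_observations:
            return None
        best = max(options, key=lambda o: self.counts[o])
        if self.counts[best] / total >= self.auto_select_share:
            return best
        return None
```

After a handful of users pick “Pay a loan”, `order` moves it to the top of the menu. Once enough selections have been recorded and that option holds a large enough share of them, `auto_answer` returns it and the menu can be skipped.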

Building A Prototype

Within the skill menu there is an ever-growing list of features to enhance your chatbot. Here you will find the autolearning option.

Your skill must be linked to an assistant and you are able to change this assistant. From here you can toggle autolearning on or off.

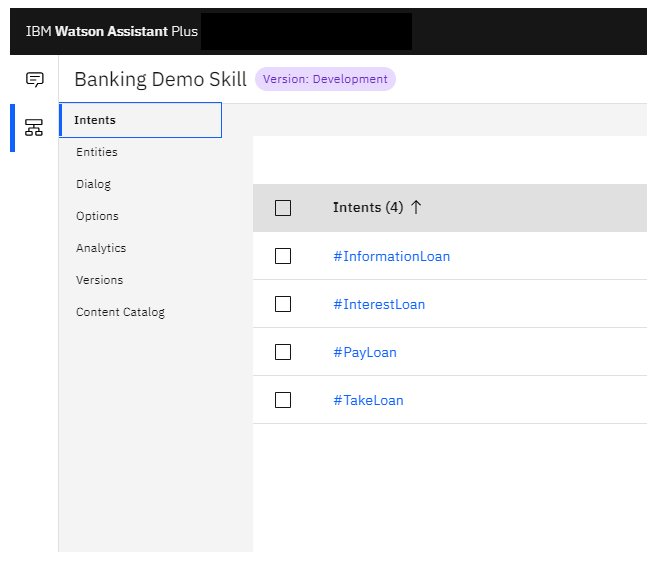

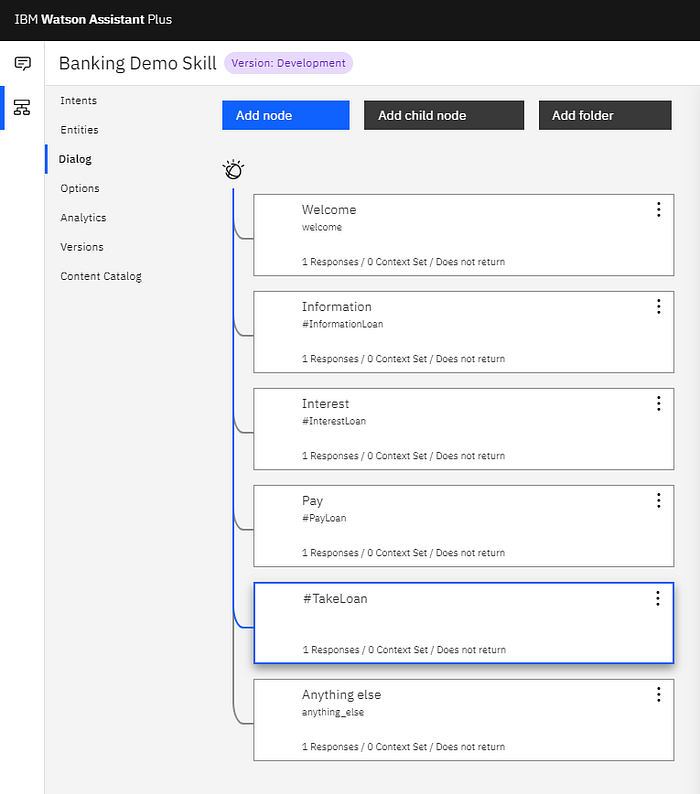

First, we create four simple intents, each related to a different aspect of loans.

The next step in the prototype is to create a dialog with conversational nodes linked to each of the intents.

The idea is that should one of the intents be matched, the specific dialog of that intent is entered.

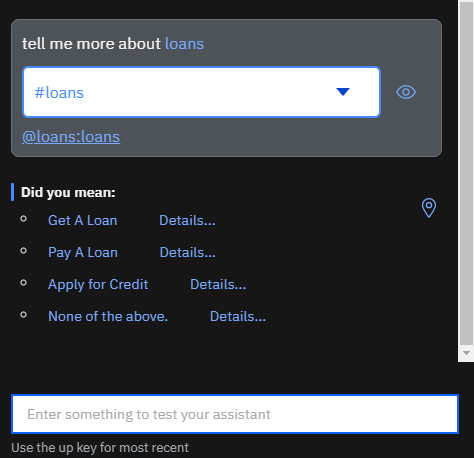

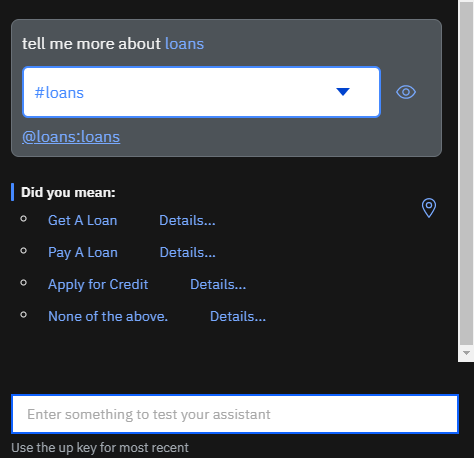

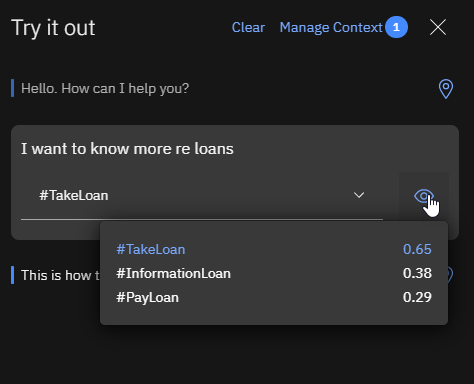

Here I enter the text “I want to know more re loans”. This is a very ambiguous statement, especially in the context of the intents defined. You can see the confidence of the top scoring intent is low.

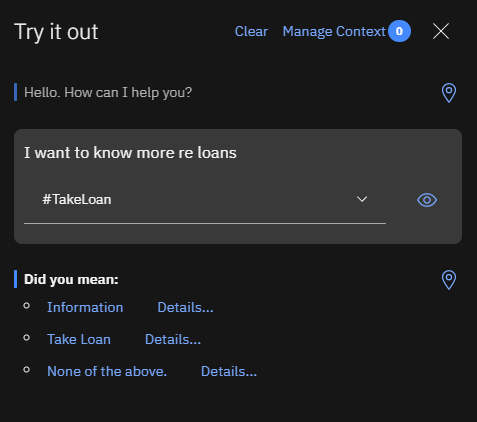

Here is the exact same user utterance, with the difference that disambiguation is now activated. So instead of defaulting to taking a loan, the chatbot tries to disambiguate the user utterance by presenting a menu with options.

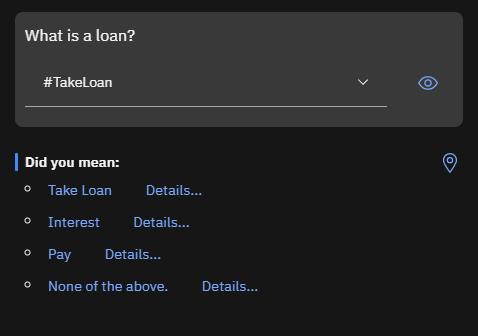

In this example you will see a more generic user utterance, and more menu items are presented in an attempt to resolve the ambiguity. This is done automatically by Watson Assistant.

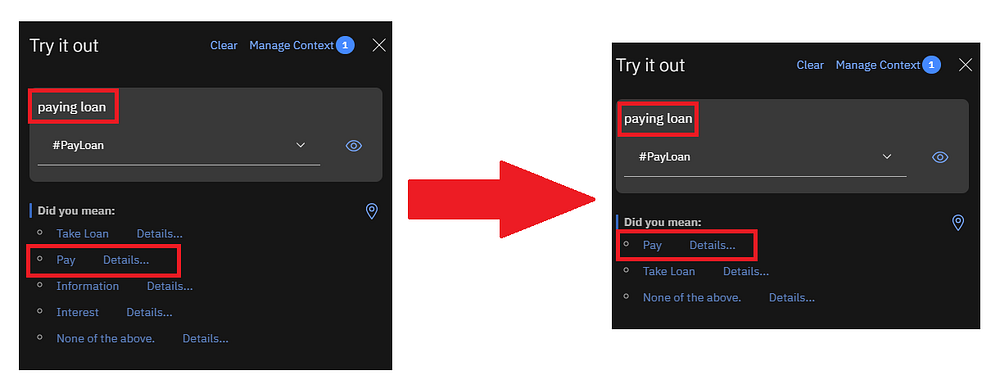

On the left, the user utterance is “paying loan”, and initially a disambiguation menu is presented with five options. The payment item is second on the list.

After entering the same utterance a few times, and selecting the Pay menu option, the presented menu started changing automatically.

The range of options presented to the user narrowed, which is a good sign, and the Pay option moved to the top of the list.

It must be stated again that this is done automatically by Watson Assistant. The options of the disambiguation menu are dynamically and automatically compiled by Watson Assistant, and can differ when the same user utterance is entered consecutively.

The same goes for autolearning. It is performed under the hood, and the end-game of this process is to eventually deprecate the disambiguation menu, at which point Watson Assistant will know what the right option is.

You can see this as the user training the chatbot to match the right intent to the right user utterance.

Conclusion

This new feature might not seem as spectacular as initially thought. However, it is a natural and incremental advancement of the Watson Assistant interface.

Current functionality and ways of work are not disrupted by this change.

The idea of disambiguation is augmented and enhanced by ordering the menu intelligently.

But more than that, it offers a safe avenue for continuous improvement via user actions.