Dealing With Compound User Intents In IBM Watson Assistant

Your Assistant Must Be Able To Respond To Multiple Intents In User Utterances

Introduction

In short, the problem is…the user input is too long, with multiple requests in one sentence or utterance.

In essence, compound intents…

The medium impacts the message. In some mediums, like SMS/text and messaging applications in general, the user input might be shorter. In mediums accessed via a keyboard or a browser, the user input tends to be longer.

The longer user input can have multiple sentences, with numerous user intents embedded in the text.

There can also be multiple entities. Users don’t always speak in single-intent, single-entity utterances.

On the contrary, users often speak in compound utterances. The moment these complex utterances are thrown at the chatbot, the bot has to play a game of single-intent winner.

Which of the user’s many intents is going to win this dialog turn?

But…what if the chatbot could detect that it had just received four sentences? The intent of the first one is the weather tomorrow in Cape Town, the second is the stock price for Apple, the third is an alarm for tomorrow morning, and so on.

Too ambitious you might think?

Not at all…very possible, doable and the tools to achieve this exist.

Best of all, many of these tools are open source and free to use…but first…

A Simple Approach Using IBM Watson Assistant

Like all cloud-based chatbot development environments, Watson Assistant lets you create a list of expected user intents.

These intents are categories used to manage the conversation. Think of an intent as the intention of the user contacting your chatbot. Intents can also be seen as verbs: the action the user wants to have performed.

Hence the user utterance needs to be assigned to one of these predefined intents. You can think of this as the domain of the chatbot. Below you can see an example of a list of intents defined and a list of user examples per intent.

Typically the user utterance is tagged with one of these intents, even if what the user says stretches over two or more intents. Most chatbots will take the intent with the highest score and take the conversation down that avenue.

Already here you should see the problem when a user utters two intents in one sentence, for example: Switch the lights on and turn the music down. Most chatbots will settle on one of the two intents in this sentence.

Our Approach

Intent recognition is expressed in most cases as a decimal score.

This decimal represents your assistant’s confidence in each recognized intent. From the example here you can see that the meeting intent scores 81% and the time intent 79%. The two are very close, and clearly both need to be addressed.

In other cases there might be more. Yet most conversational environments will address only the highest score, leaving the user with no option other than to retype the second intent, hopefully with no other intents this time.
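To make the winner-takes-all problem concrete, here is a minimal Python sketch. It is not Watson Assistant code. The meeting and time scores come from the example above; the weather intent and the function name rank_intents are assumptions made purely for illustration.

```python
# Hypothetical NLU result; "meeting" and "time" mirror the example above,
# "weather" is invented for illustration.
scores = {"meeting": 0.81, "time": 0.79, "weather": 0.12}


def rank_intents(scores):
    """Return (intent, confidence) pairs sorted from most to least confident."""
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


ranked = rank_intents(scores)
winner, runner_up = ranked[0], ranked[1]
gap = winner[1] - runner_up[1]

# A winner-takes-all bot answers only the first line and drops the second.
print(f"Winner-takes-all answers only: {winner[0]}")
print(f"Ignored runner-up: {runner_up[0]} (behind by {gap:.2f})")
```

With a gap this small, treating the runner-up as noise means the user has to ask again.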

Dialog Configuration For Multiple Intents

There are simple ways of addressing this problem and helping your chatbot to be more resilient. Here I will show you a simple way of achieving this within the IBM Watson Assistant environment.

The Dialog Structure

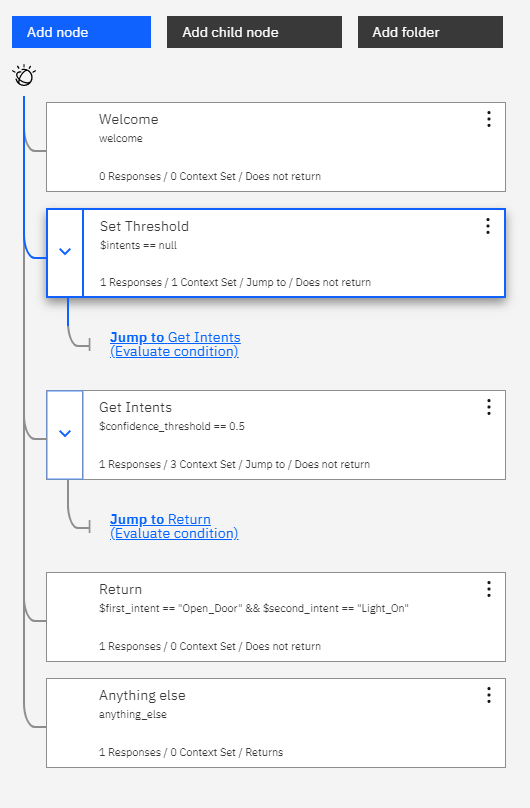

I went with the simplest dialog structure possible to create this example. Here you can see some of the conditions within the image. The idea is for the conversation to skip through the initial dialog nodes and evaluate the conditions.

Watson Assistant’s dialog creation and management web environment is powerful and feature rich. It is continuously evolving with new functionality visible every so often.

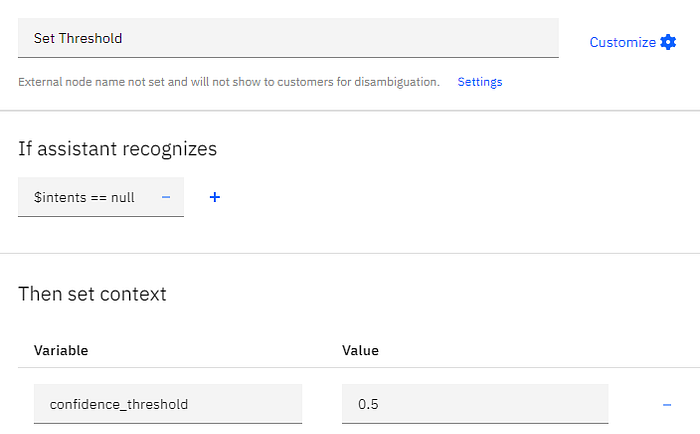

Setting The Threshold

Within the second node we create the context variable named $intents and set it to zero. We will use this to capture all the intents gleaned from the user input.

Later in the dialog, this variable will hold all the intents we capture. We also create a context variable called $confidence_threshold and set it to 0.5. The idea is to discard intents with a confidence lower than 50%. This threshold can be tweaked based on the results you achieve within your application.

In general you will see a clearly segregated top grouping and then the rest.

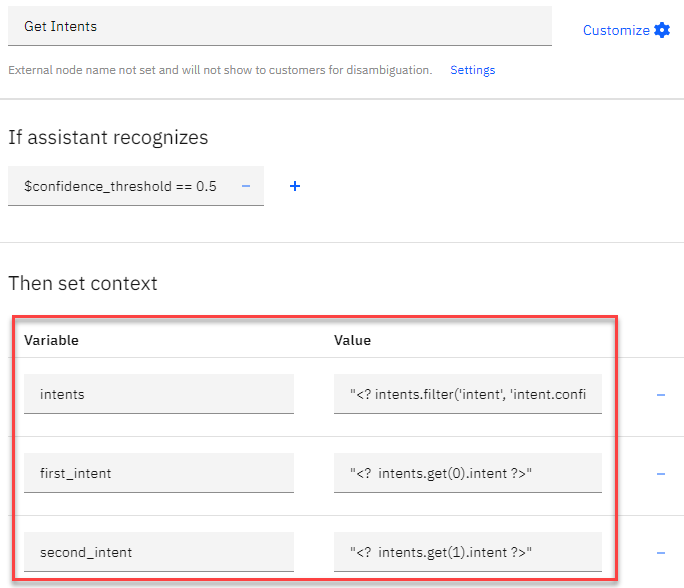

Getting The Intent Values

In the third dialog node we define three more context variables and assign values to them. First we define a variable with the name $intents. Then we use the Value field to enter the following:

"<? intents.filter('intent', 'intent.confidence >= $confidence_threshold') ?>"

To learn more about expression language methods, take a look at IBM’s documentation. We keep only the intents whose confidence is equal to or greater than the 50% threshold we set.
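For readers who think more easily in code than in dialog expressions, the same filtering idea can be sketched in Python. This is an illustration of the logic only, not something you paste into Watson Assistant. The function name filter_intents and the sample data are assumptions; the intent and confidence keys follow the property names used in the expression above.

```python
def filter_intents(intents, confidence_threshold=0.5):
    """Keep only intents whose confidence is at or above the threshold."""
    return [
        intent
        for intent in intents
        if intent["confidence"] >= confidence_threshold
    ]


# Hypothetical recognition result for one user utterance.
intents = [
    {"intent": "meeting", "confidence": 0.81},
    {"intent": "time", "confidence": 0.79},
    {"intent": "weather", "confidence": 0.12},
]

kept = filter_intents(intents)
print([item["intent"] for item in kept])  # ['meeting', 'time']
```

Raising or lowering confidence_threshold here has the same effect as tweaking $confidence_threshold in the dialog: a higher value keeps fewer intents.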

From here…

We are going to extract only the first two intents, as those are the ones we are interested in. For the first intent we define the variable first_intent, and for its value we use:

"<? intents.get(0).intent ?>"

This extracts the first intent value from the list of intents. Then we create a context variable named second_intent and assign it the second listed intent value:

"<? intents.get(1).intent ?>"

You can see the pattern here, and so you can go down the list. You can also create a loop to go through the list.

Now our values will be captured via context variables within the course of the conversation. These values can now be used to direct the dialog and support decisions on what is presented to the users.
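The loop idea can be sketched in Python as follows. This is a hedged illustration rather than dialog configuration: the function capture_intents is hypothetical, and returning None for a missing intent is a choice made for this sketch. How the dialog itself behaves when only one intent is recognized is worth checking in the test pane.

```python
def capture_intents(intents, names=("first_intent", "second_intent")):
    """Map each variable name to the intent at the same position, if any."""
    context = {}
    for position, name in enumerate(names):
        if position < len(intents):
            context[name] = intents[position]["intent"]
        else:
            # Fewer intents than variables: mark the slot as empty.
            context[name] = None
    return context


# Hypothetical list of intents, already sorted by confidence.
intents = [
    {"intent": "meeting", "confidence": 0.81},
    {"intent": "time", "confidence": 0.79},
]

print(capture_intents(intents))
# {'first_intent': 'meeting', 'second_intent': 'time'}
print(capture_intents(intents[:1]))
# {'first_intent': 'meeting', 'second_intent': None}
```

Adding a third name to the names tuple extends the pattern without writing another expression by hand.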

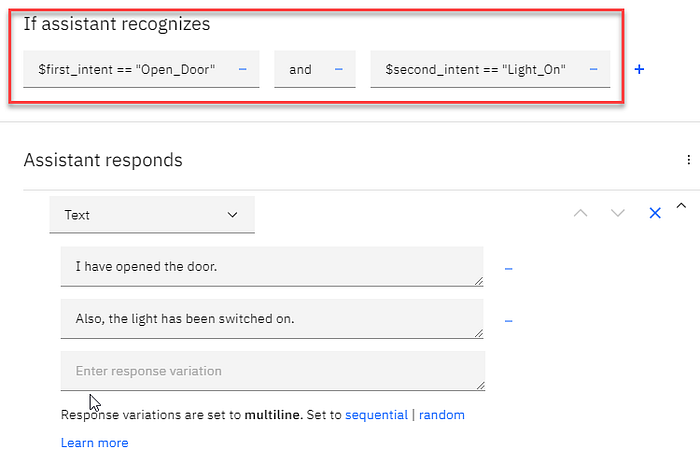

Dialog Decision

This is one example of where we create a condition within a dialog node; if both of these intents are recognized, the node is visited.

This is a mere illustration in the simplest form possible. For a production environment, the best solution would be to handle the intents separately and not in one dialog node, thus minimizing the number of combinations you need to make provision for.
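Handling the intents separately could look something like the Python sketch below. Everything in it is an assumption for illustration: the handler functions, their replies and the respond function are invented, and a real assistant would do this with dialog nodes rather than Python.

```python
# Hypothetical handlers, one per intent; names and replies are invented.
def handle_meeting():
    return "Sure, let's set up that meeting."


def handle_time():
    return "What time suits you?"


HANDLERS = {"meeting": handle_meeting, "time": handle_time}


def respond(captured_intents):
    """Answer each captured intent in order, skipping unknown ones."""
    replies = [
        HANDLERS[name]() for name in captured_intents if name in HANDLERS
    ]
    # Fall back to a single clarification when nothing was recognized.
    return replies or ["Sorry, I did not catch that."]


for reply in respond(["meeting", "time"]):
    print(reply)
```

With one handler per intent, supporting a new intent means adding one entry, instead of a new condition for every possible pair.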

Testing Our Prototype

Testing our prototype within the test pane shows how, with a multi-intent utterance, the intents are captured as context variables and used within the dialog, allowing the bot to respond accordingly.

Conclusion

There is no magic remedy to make a conversational interface just that; conversational.

It will take time and effort.

But it is important to note that commercially available chatbot solutions should not be seen as a package by which you need to abide. Additional layers can be introduced to advise the user and inform the chatbot’s basic NLU.

A chatbot must be seen within an organization as a Conversational AI interface and the aim is to further the conversation and give the user guidelines to take the conversation forward.

If the user utterances just bounce off the chatbot and the user needs to figure out how to approach the conversation without any guidance, the conversation is bound to be abandoned.