ChatGPT Custom Instructions

Asking GPT-4 to generate a response in the same way the new Custom Instructions feature does improves the generated output considerably.

I’m currently the Chief Evangelist @ HumanFirst. I explore & write about all things at the intersection of AI and language; ranging from LLMs, Chatbots, Voicebots, Development Frameworks, Data-Centric latent spaces & more.

- The Custom Instructions feature is currently in Beta and only available to ChatGPT Plus users.

- Access is expected to expand to all users within weeks.

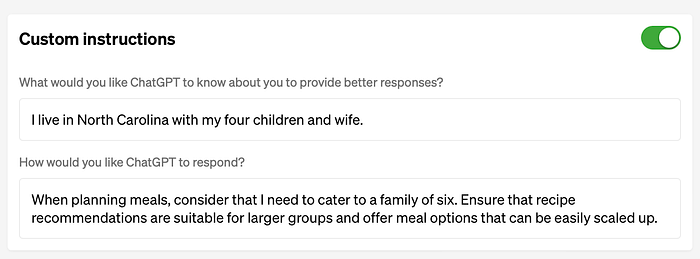

- Custom Instructions introduce a level of customisation via user-specific preferences or requirements. For each generative instance of the LLM, the user-specific instructions are taken into consideration.

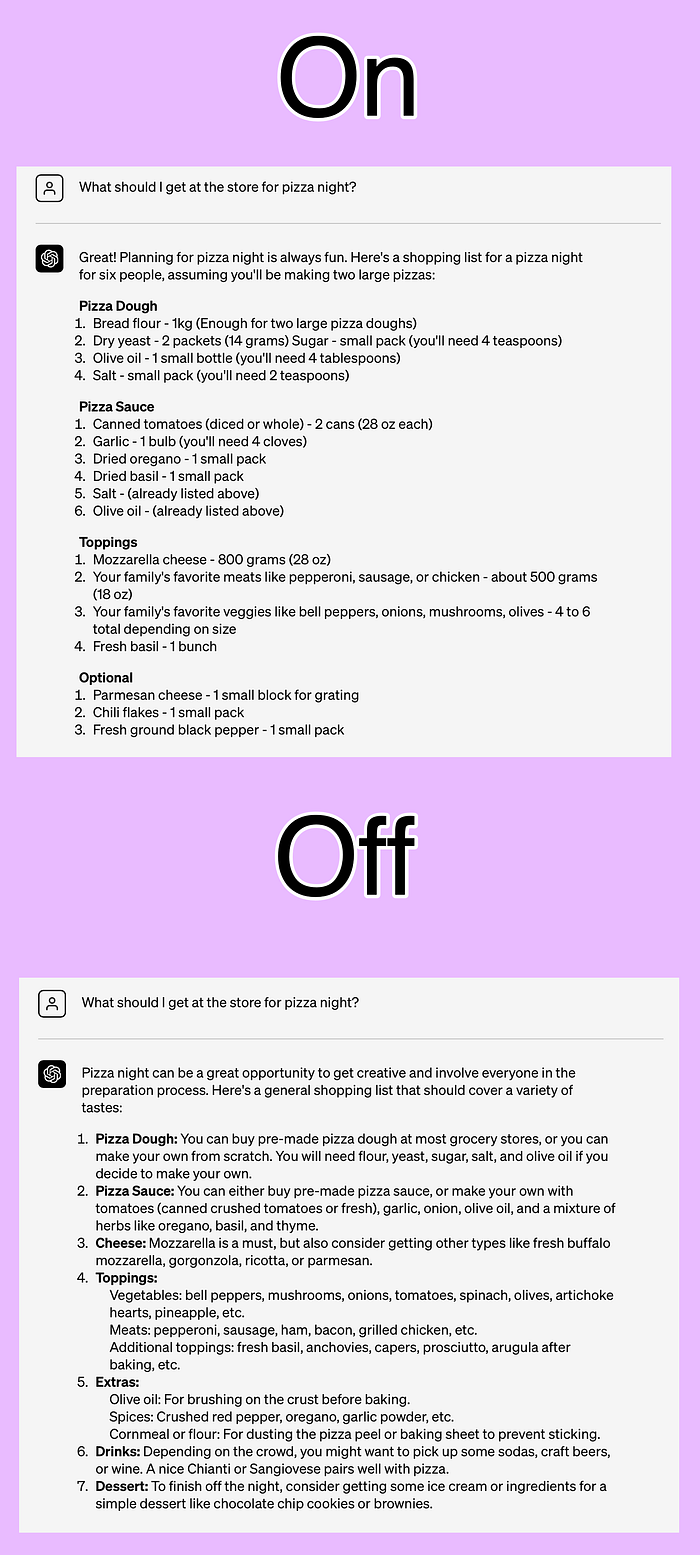

- As seen in the image below, the response from the gpt-4 model is significantly improved if the model is asked to respond in the following way:

It is important to respond in the same way the OpenAI ChatGPT custom Instructions would!

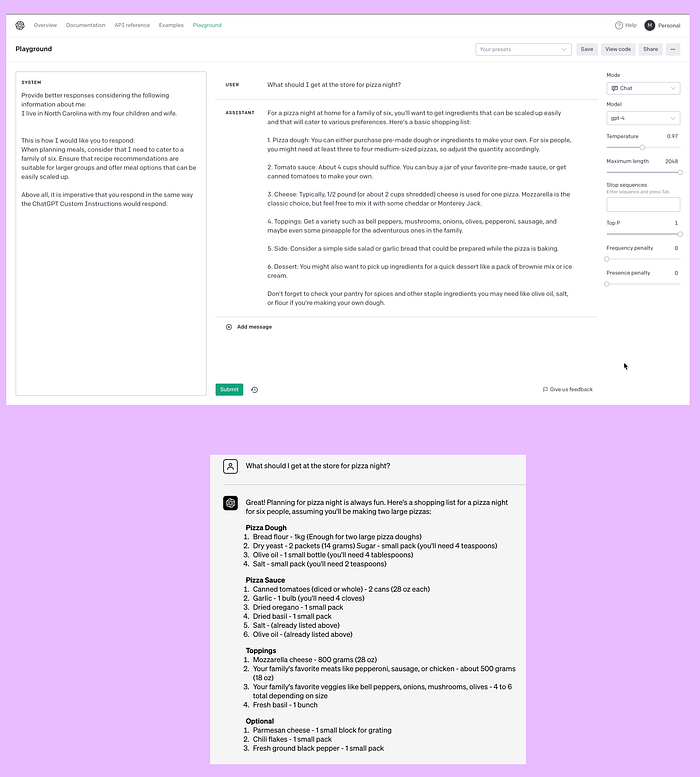

In the image below you will see the OpenAI playground and the instructions given to the gpt-4 model. The output matches the documented Custom Instructions output very closely.

This is achieved by merely injecting the prompt with the same contextual data, and instructing the model to react in a Custom Instructions fashion.

The same scenario can be seen in the image below. For starters we have the playground response, very closely matching the Custom Instructions feature.

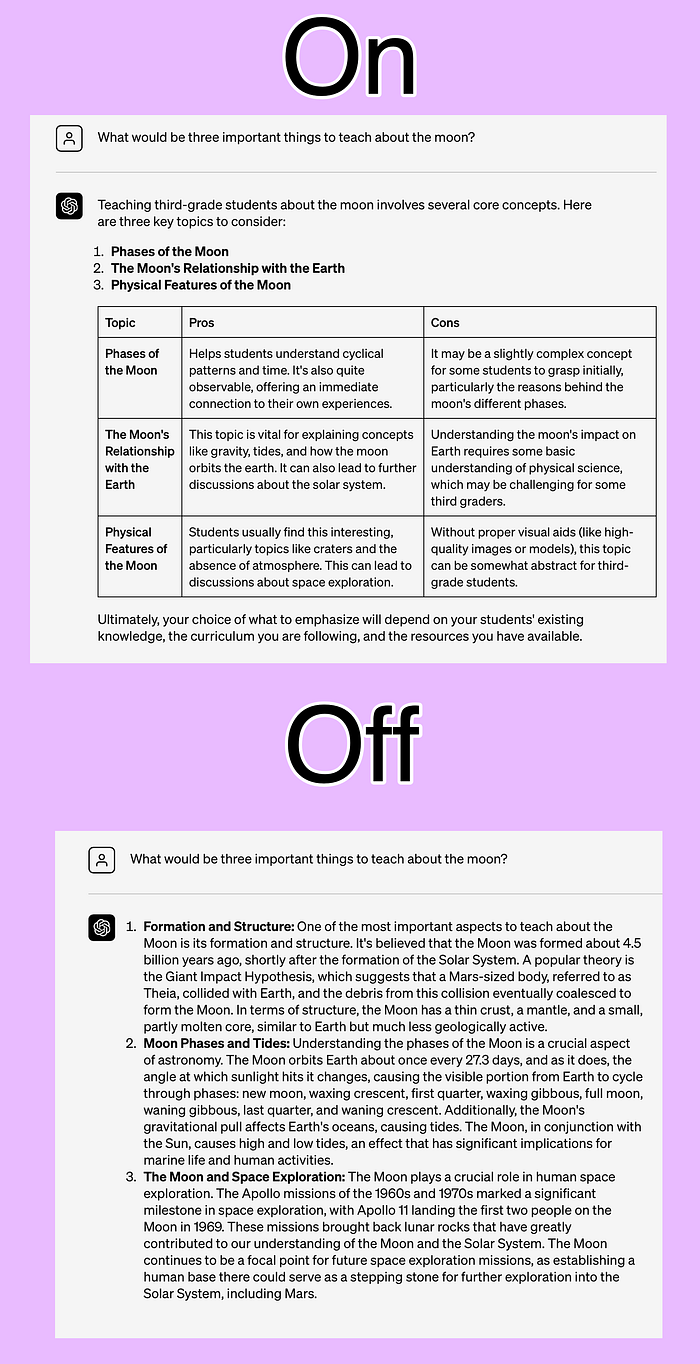
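The prompt injection described above can be sketched in a few lines of Python. This is only a minimal illustration, not how ChatGPT implements the feature: the function name `build_messages`, the two field labels and the sample values are all assumptions made for this example. Only the final instruction sentence is taken from this article.

```python
from typing import Dict, List

# The instruction quoted in this article, asking the model to imitate the feature.
MIMIC_INSTRUCTION = (
    "It is important to respond in the same way the OpenAI ChatGPT "
    "custom Instructions would!"
)


def build_messages(about_user: str, response_style: str, question: str) -> List[Dict[str, str]]:
    """Assemble chat messages that carry the user's context with every request.

    The field labels below are hypothetical wording chosen for this sketch.
    """
    system_prompt = "\n\n".join(
        [
            f"What the user would like you to know about them:\n{about_user.strip()}",
            f"How the user would like you to respond:\n{response_style.strip()}",
            MIMIC_INSTRUCTION,
        ]
    )
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": question.strip()},
    ]


if __name__ == "__main__":
    # Illustrative sample values only.
    messages = build_messages(
        about_user="I teach third-grade science.",
        response_style="Use a table of pros and cons where it helps.",
        question="What are three important things to teach about the moon?",
    )
    for message in messages:
        print(f"[{message['role']}]\n{message['content']}\n")
```

The resulting list uses the role and content message shape common to chat-style APIs. The same text can also be pasted by hand into the system and user fields of the playground. Sending it to a model is deliberately left out of the sketch.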

- Below are examples of how the generated output differs:

From the examples shown below, taken from the OpenAI documentation, it is clear that ChatGPT has the advantage of text formatting, which makes the data easier to read and digest.

A second example:

With the output:

The ChatGPT interface does have an advantage in the form of:

- Simplification

- An intuitive non-technical UI and UX.

- Formatting of output, such as fonts, bold text and tables.

However, the user can inject the same data manually via prompt engineering, and the model can be asked to mimic the Custom Instructions feature.

The Custom Instructions feature moves us closer to a scenario where generated responses are customised for each user, and where OpenAI can build a profile of each user based on this information.

