Chatbots: Natural Language Generation In 7 Easy Steps

Transform Structured Data Into Unstructured Natural Language

What is Natural Language Generation?

NLG is a software process in which structured data is transformed into natural, conversational language for output to the user. In other words, structured data is presented to the user in an unstructured manner. Think of NLG as the inverse of NLU.

With NLU, we take the unstructured conversational input from the user (natural language) and structure it for our software process. With NLG, we take structured data from the backend and state machines and turn it into unstructured data: conversational output in human language.
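To make the structured-to-unstructured direction concrete, here is a minimal sketch of the simplest possible NLG: a hand-written template that turns a structured record into a sentence. The record type `AccountState`, its fields and the template wording are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class AccountState:
    """Hypothetical structured data a backend might hand to the chatbot."""
    name: str
    balance: float
    due_date: date


def render_balance_message(state: AccountState) -> str:
    """Turn structured fields into a conversational sentence (template-based NLG)."""
    # Two decimals plus a thousands separator, e.g. 1234.5 -> "$1,234.50".
    amount = f"${state.balance:,.2f}"
    # Human-friendly date without a leading zero, e.g. "March 5".
    when = f"{state.due_date.strftime('%B')} {state.due_date.day}"
    return f"Hi {state.name}, your balance is {amount} and it is due on {when}."


print(render_balance_message(AccountState("Ana", 1234.5, date(2020, 3, 5))))
# Hi Ana, your balance is $1,234.50 and it is due on March 5.
```

A template like this is fully static: every possible sentence shape is written by hand in advance.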

Commercial NLG is emerging, and forward-looking solution providers are looking at incorporating it into their solutions. At this stage you might be struggling to get your mind around the practicalities of this, so below is one practical example that might help.

The Why

With machine learning readily available, why should we still manually define the script of our chatbot or conversational system for each node or state in our state machine?

There are options to make it more human-like, for instance defining multiple dialogue options per node and then presenting them in a random or sequential fashion.

This creates the illusion of a non-fixed, dynamic dialogue. However, as dynamic as it might seem, it is still static at its heart, even if to a lesser degree.
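The "multiple dialogue options per node" approach can be sketched as follows. The `DialogueNode` class and the example greetings are hypothetical; the point is that random and sequential selection only rotate through strings that were written in advance.

```python
import itertools
import random
from typing import Iterator, List, Optional


class DialogueNode:
    """A state-machine node with several hand-written response variants."""

    def __init__(self, responses: List[str], mode: str = "random",
                 seed: Optional[int] = None):
        if not responses:
            raise ValueError("a node needs at least one response")
        if mode not in ("random", "sequential"):
            raise ValueError("mode must be 'random' or 'sequential'")
        self.responses = responses
        self.mode = mode
        self._rng = random.Random(seed)                          # per-node RNG, reproducible with a seed
        self._cycle: Iterator[str] = itertools.cycle(responses)  # round-robin order

    def respond(self) -> str:
        """Pick the next response: a random variant, or the next one in order."""
        if self.mode == "random":
            return self._rng.choice(self.responses)
        return next(self._cycle)


greeting = DialogueNode(["Hi there!", "Hello, how can I help?", "Good to see you."],
                        mode="sequential")
print([greeting.respond() for _ in range(4)])
# ['Hi there!', 'Hello, how can I help?', 'Good to see you.', 'Hi there!']
```

However the variants are chosen, the bot can never say anything outside the `responses` list.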

The How

Using Natural Language Generation (NLG), of course.

Why not take a sample of a few hundred thousand scripts and create a TensorFlow model using Google’s Colab environment?

Then, based on key intents, generate a response or dialogue from the model. Hence, generating natural language: unstructured output based on structured input. Let’s have a quick look at the basic premise of Natural Language Generation (NLG) and then at one practical example.

Step-By-Step

Let us start by creating a fake news headline generator. We will generate fake news headlines from a set of training data, based on one or more keywords.

Each record in the training data is a newspaper headline, and I used these records to create a TensorFlow model. Based on this model, I could then enter one or two intents, and random “fake” (hence non-existing) headlines were generated. There is a host of parameters that can be used to tweak the output.

First, you need your training data.

Create a free login here. There is a dataset containing a million news headlines published over a period of 15 years. I only used 180,000 entries and only the headline_text column.
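A minimal sketch of this preparation step, assuming the download is a CSV file containing the `headline_text` column mentioned above. The function name `extract_headlines` and the file names in the commented-out call are hypothetical placeholders; the output is a plain text file with one headline per line, which is the kind of file the notebook trains on.

```python
import csv


def extract_headlines(csv_path: str, txt_path: str, limit: int = 180_000) -> int:
    """Copy the headline_text column of csv_path into txt_path, one headline per line.

    Only the first `limit` non-empty headlines are kept. Returns the number of lines written.
    """
    written = 0
    with open(csv_path, newline="", encoding="utf-8") as src, \
         open(txt_path, "w", encoding="utf-8") as dst:
        for row in csv.DictReader(src):
            headline = row["headline_text"].strip()
            if not headline:
                continue  # skip blank headlines
            dst.write(headline + "\n")
            written += 1
            if written >= limit:
                break  # stop once the requested sample size is reached
    return written


# Hypothetical file names; adjust them to wherever you saved the download.
# extract_headlines("news_headlines.csv", "headlines.txt")
```

Keeping one headline per line also makes it easy to cut generated samples at the first newline later.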

This is your data.

Go to the Colab notebook.

This notebook consists of 7 steps.

The notebook opens in playground mode by default. Save a copy to your Google Drive; this is the most convenient option, and your changes will be saved there.

From here, follow the notebook instructions. Run the first cell, which installs gpt-2-simple, among other things.

Next, run the GPU cell and download GPT-2. Keep in mind that all the commands are already there; you need not type any commands in. You can run everything with the defaults without any problems.

Next, mount your Google Drive. The notebook takes you through the process, and Google Drive will furnish you with an authorization code for Colab to consume.

After mounting, you can upload your *.txt file with your training data. Make sure the path of the file in your Google Drive matches the path the notebook expects. This is one place where you can get stuck if you don’t pay attention.

After copying the file, you can run the training. Once the model is trained, copy the checkpoint folder to your own Google Drive; this is important for loading and using the model later.

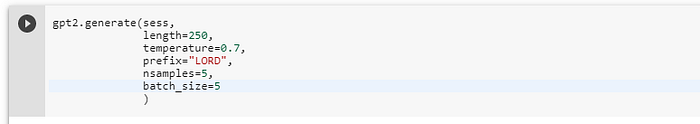
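For reference, the training cells described above roughly correspond to the following `gpt_2_simple` calls. This is a sketch, not a copy of the notebook: the model size `124M`, the step count, the run name `run1`, the file name `headlines.txt` and the `FinetuneConfig` helper are all assumptions. It only works inside Colab (GPU runtime, `gpt-2-simple` installed), so the import is deferred into the function.

```python
from dataclasses import dataclass


@dataclass
class FinetuneConfig:
    # All values are assumptions; tune them for your own data and runtime.
    file_name: str = "headlines.txt"  # path relative to the root of "My Drive"
    model_name: str = "124M"          # smallest released GPT-2 model
    steps: int = 1000                 # number of fine-tuning steps
    run_name: str = "run1"            # checkpoint folder name under ./checkpoint/


def finetune_on_colab(cfg: FinetuneConfig):
    """Download GPT-2, fine-tune it on cfg.file_name and back up the checkpoint."""
    import gpt_2_simple as gpt2  # deferred: only importable after installing gpt-2-simple

    gpt2.download_gpt2(model_name=cfg.model_name)  # fetch the pretrained weights
    gpt2.mount_gdrive()                            # walks you through authorizing Google Drive
    # Copies the file from Drive into the Colab VM; this is where a wrong path fails.
    gpt2.copy_file_from_gdrive(cfg.file_name)

    sess = gpt2.start_tf_sess()
    gpt2.finetune(sess,
                  dataset=cfg.file_name,
                  model_name=cfg.model_name,
                  steps=cfg.steps,
                  run_name=cfg.run_name)
    gpt2.copy_checkpoint_to_gdrive(run_name=cfg.run_name)  # keep the trained model for later
    return sess
```

In a later session, `gpt2.copy_checkpoint_from_gdrive(run_name="run1")` followed by `gpt2.load_gpt2(sess, run_name="run1")` restores the trained model without retraining.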

The fun part is running the cell below to create a fake headline based on the prefix defined. Be sure to play around with the other parameters of the command.
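Of the parameters worth playing with, `temperature` has the most visible effect. The sketch below assumes `sess` already holds the fine-tuned checkpoint (for example via `gpt2.start_tf_sess()` and `gpt2.load_gpt2(sess, run_name="run1")`); the helper names, `length=40` and `truncate="\n"` (which relies on one headline per line in the training file) are assumptions. The pure `temperature_softmax` helper shows what temperature does to the model's next-token probabilities: values below 1 sharpen them towards the likeliest tokens, values above 1 flatten them and produce stranger headlines.

```python
import math
from typing import List


def temperature_softmax(logits: List[float], temperature: float) -> List[float]:
    """Convert raw scores into sampling probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)                         # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def generate_headlines(sess, prefix: str, run_name: str = "run1", n: int = 5,
                       temperature: float = 0.7) -> List[str]:
    """Sample n headlines that start with `prefix` from an already loaded checkpoint."""
    import gpt_2_simple as gpt2  # deferred: only importable after installing gpt-2-simple

    return gpt2.generate(sess,
                         run_name=run_name,
                         prefix=prefix,
                         length=40,                # max tokens per sample (assumed value)
                         temperature=temperature,  # higher = more surprising output
                         nsamples=n,
                         batch_size=n,             # generate all samples in one batch
                         truncate="\n",            # cut each sample at the end of its first line
                         return_as_list=True)
```

Comparing `temperature_softmax([1.0, 2.0, 3.0], 0.5)` with the same call at `2.0` shows the top token's probability shrinking as the temperature rises.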

The Final Product

Conclusion

To most, it might seem too futuristic and risky to place the response to a customer in the hands of a pre-trained model.

However, one practical application where a solution like this can be implemented quite safely is intent training.

During chatbot design, the team comes up with a list of intents the bot should be able to handle. Then, for each of those intents, example user utterances must be supplied to train the model. Coming up with a decent number of examples can be daunting.

If user conversations are available, even from live-agent conversations, a TensorFlow model can be created from those existing conversations, and intents can then be passed to the model.

New, random utterances can be generated per intent or grouping of intents. The designers can then curate the output and add it to the training data.
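A minimal sketch of that generate-then-curate loop, assuming the model was trained on a hypothetical file format with one `intent | utterance` pair per line, so the intent name plus `" | "` can serve as the generation prefix. The `generate` callable stands in for whatever model call you use (for example a wrapper around `gpt_2_simple.generate`); it is injected so the filtering logic can be checked without a model. The function drops samples that drifted to another intent, empty samples and anything already in the training set, and leaves the rest for human review.

```python
from typing import Callable, Dict, Iterable, List, Set


def propose_utterances(intents: Iterable[str],
                       existing: Dict[str, Set[str]],
                       generate: Callable[[str, int], List[str]],
                       per_intent: int = 20) -> Dict[str, List[str]]:
    """Return new, de-duplicated candidate utterances per intent for designers to curate.

    Assumes each generated line looks like "<intent> | <utterance>" (hypothetical format).
    """
    candidates: Dict[str, List[str]] = {}
    for intent in intents:
        prefix = f"{intent} | "
        # Case-insensitive set of utterances we already have for this intent.
        seen = {u.lower() for u in existing.get(intent, set())}
        fresh: List[str] = []
        for line in generate(prefix, per_intent):
            if not line.startswith(prefix):
                continue  # model drifted to another intent or format; drop it
            utterance = line[len(prefix):].strip()
            key = utterance.lower()
            if utterance and key not in seen:
                seen.add(key)
                fresh.append(utterance)
        candidates[intent] = fresh
    return candidates
```

Only utterances the designers approve from `candidates` would then be added to the NLU training data.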
