Build Your Own ChatGPT or HuggingChat

By making use of Haystack and Open Assistant, you can create a HuggingChat- or ChatGPT-like application.

HuggingChat and ChatGPT (as seen below) are both general chatbot interfaces. Users can have general conversations with the chatbots via a web-based GUI. The idea is that both of these services will act as the ultimate personal assistant.

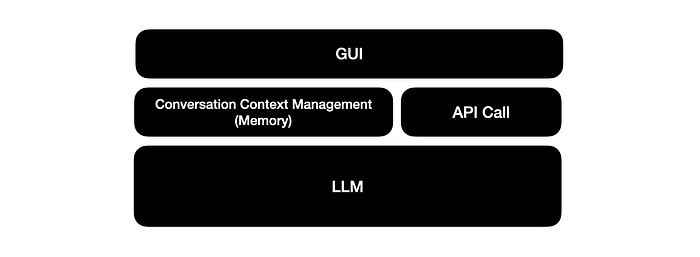

These interfaces consist of four key layers:

- Large Language Model

- API Call

- Conversation memory/context management

- Graphical User Interface
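As a rough illustration of how the layers listed above fit together, here is a minimal offline sketch. The names `toy_model`, `call_api`, `handle_message` and `show` are hypothetical and invented for this example; a real application would replace the toy model with a hosted LLM and the print calls with a web GUI.

```python
from typing import Callable, List


# Layer 1: the large language model. This stand-in (an assumption for the
# sketch) only reports how much context it was given.
def toy_model(prompt: str) -> str:
    return f"reply based on {len(prompt.splitlines())} line(s) of context"


# Layer 2: the API call. In practice this is an HTTP request to a hosted
# model; here it is a plain function call so the sketch runs offline.
def call_api(prompt: str, model: Callable[[str], str] = toy_model) -> str:
    return model(prompt)


# Layer 3: conversation memory. Every turn is stored and replayed to the
# model, because the model keeps no state between calls.
history: List[str] = []


def handle_message(user_message: str) -> str:
    history.append(f"Human: {user_message}")
    reply = call_api("\n".join(history))
    history.append(f"AI: {reply}")
    return reply


# Layer 4: the user interface. A web GUI would go here; printing is enough
# to show where it plugs in.
def show(user_message: str) -> None:
    print("You:", user_message)
    print("Bot:", handle_message(user_message))
```

Calling `show("Hi")` twice in a row makes the second reply report more lines of context than the first, which is the memory layer at work.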

As use of these chat interfaces grows, users naturally want to develop or build on them. And the LLMs on which these chat interfaces are built are now available.

In the case of ChatGPT, the LLMs used are gpt-4, gpt-3.5-turbo, gpt-4-0314 and gpt-3.5-turbo-0301.

In the case of HuggingChat, the current model is OpenAssistant/oasst-sft-6-llama-30b-xor.

However, API access alone does not take care of conversation context and memory; this has to be developed separately.

Integrating memory enables human-like conversations with Large Language Models (LLMs). Conversation context is managed, and users can ask follow-up questions that implicitly reference earlier turns. This element is vital for longer multi-turn conversations.
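To show why memory matters for follow-up questions, the sketch below builds the prompt for the same follow-up twice, once without and once with the earlier exchange. The `build_prompt` function, the `Human:`/`AI:` labels and the sample exchange are assumptions made up for this illustration, not the format any particular service uses.

```python
from typing import List, Tuple

Turn = Tuple[str, str]  # (user message, assistant reply)


def build_prompt(history: List[Turn], user_message: str) -> str:
    """Prepend earlier turns so the model can resolve implicit references."""
    lines = []
    for question, answer in history:
        lines.append(f"Human: {question}")
        lines.append(f"AI: {answer}")
    lines.append(f"Human: {user_message}")
    lines.append("AI:")
    return "\n".join(lines)


# A made-up first exchange and an implicit follow-up question.
history: List[Turn] = [("Name two colours.", "1. Red 2. Blue")]
follow_up = "Tell me more about the second one."

without_memory = build_prompt([], follow_up)
with_memory = build_prompt(history, follow_up)

print(without_memory)
print("---")
print(with_memory)
```

Without the earlier turn, nothing in the prompt says what "the second one" refers to; with it, the model has the list it needs.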

Haystack recently released a notebook that guides users step by step through building their own HuggingChat-style interface, based on an Open Assistant model from the same family that HuggingChat currently uses.

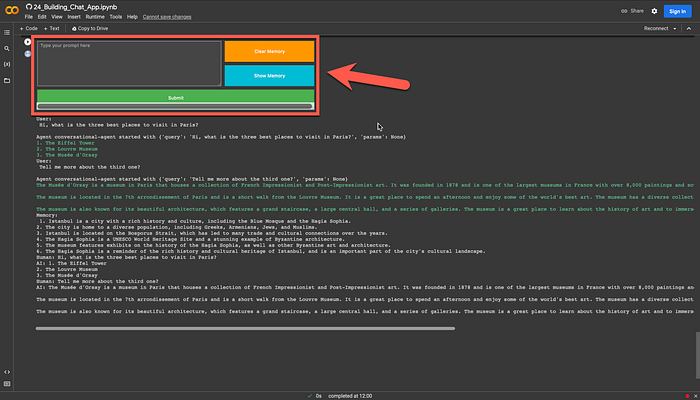

As you can see below, the notebook also generates a GUI through which you can have a conversation with the chatbot and view or clear its memory.

⭐️ Please follow me on LinkedIn for updates on LLMs ⭐️

Here follows a complete guide on how to have your own personal assistant running in a notebook.

First, install Haystack:

pip install --upgrade pip

pip install farm-haystack[colab]

To access Hugging Face's hosted inference APIs, you need to provide a Hugging Face API key:

from getpass import getpass

model_api_key = getpass("Enter model provider API key:")

A PromptNode is initialised with the model name, the API key and a max_length setting. We will reference an Open Assistant model from the same family as the one behind HuggingChat:

OpenAssistant/oasst-sft-1-pythia-12b

from haystack.nodes import PromptNode

model_name = "OpenAssistant/oasst-sft-1-pythia-12b"

prompt_node = PromptNode(model_name, api_key=model_api_key, max_length=256)

To make the conversational interface more human-like, memory needs to be created.

There are two types of memory options in Haystack:

- ConversationMemory: stores the conversation history (default).

- ConversationSummaryMemory: stores the conversation history and periodically generates summaries.
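To make the difference between the two options concrete, here is a plain-Python sketch of the idea. It is not Haystack's implementation: `BufferMemory`, `SummarizingMemory`, `every_n_turns` and `toy_summarizer` are hypothetical names, the summariser is a stub standing in for an LLM call, and dropping the verbatim turns after each summary is a design choice of this sketch.

```python
from typing import Callable, List, Tuple

Turn = Tuple[str, str]  # (user message, assistant reply)


class BufferMemory:
    """Keeps every turn verbatim."""

    def __init__(self) -> None:
        self.turns: List[Turn] = []

    def save(self, question: str, answer: str) -> None:
        self.turns.append((question, answer))

    def load(self) -> str:
        return "\n".join(f"Human: {q}\nAI: {a}" for q, a in self.turns)


class SummarizingMemory(BufferMemory):
    """Folds buffered turns into a running summary every `every_n_turns`."""

    def __init__(self, summarize: Callable[[str], str], every_n_turns: int = 2) -> None:
        super().__init__()
        self.summarize = summarize
        self.every_n_turns = every_n_turns
        self.summary = ""

    def save(self, question: str, answer: str) -> None:
        super().save(question, answer)
        if len(self.turns) >= self.every_n_turns:
            # Summarise the old summary plus the buffered turns, then drop
            # the verbatim turns to keep the stored context short.
            text = (self.summary + "\n" + super().load()).strip()
            self.summary = self.summarize(text)
            self.turns.clear()

    def load(self) -> str:
        recent = super().load()
        return "\n".join(part for part in (self.summary, recent) if part)


def toy_summarizer(text: str) -> str:
    """Stand-in for an LLM call: keeps only the first 40 characters."""
    return "Summary: " + text[:40]
```

The trade-off is the usual one: the buffer is exact but grows with every turn, while the summary stays compact at the cost of detail and an extra model call. In Haystack, the summary variant is created as follows: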

from haystack.agents.memory import ConversationSummaryMemory

summary_memory = ConversationSummaryMemory(prompt_node)

The ConversationalAgent can now be initialised. By default, ConversationalAgent uses the conversational-agent PromptTemplate.

from haystack.agents.conversational import ConversationalAgent

conversational_agent = ConversationalAgent(prompt_node=prompt_node, memory=summary_memory)

Memory use can be illustrated by asking a question and then a second question that implicitly references the context of the first:

Question One:

Tell me three most interesting things about Istanbul, Turkey

Question Two:

Can you elaborate on the second item?

Below you can see how questions can be posted:

conversational_agent.run("Tell me three most interesting things about Istanbul, Turkey")

And the implicit follow-up question:

conversational_agent.run("Can you elaborate on the second item?")

Take your chat experience to the next level with the example application below…

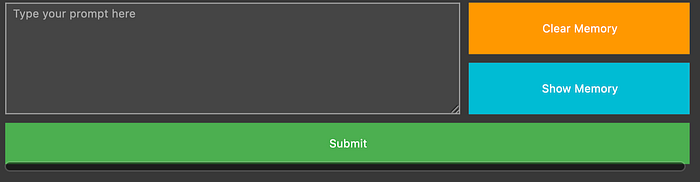

Here is the interactive chat window right within Colab which the code will generate:

Execute the code cell below and use the text area to exchange messages with the conversational agent. Use the buttons on the right to load or delete the chat history.

import ipywidgets as widgets
from IPython.display import clear_output

## Text Input
user_input = widgets.Textarea(
    value="",
    placeholder="Type your prompt here",
    disabled=False,
    style={"description_width": "initial"},
    layout=widgets.Layout(width="100%", height="100%"),
)

## Submit Button
submit_button = widgets.Button(
    description="Submit", button_style="success", layout=widgets.Layout(width="100%", height="80%")
)

def on_button_clicked(b):
    user_prompt = user_input.value
    user_input.value = ""
    print("\nUser:\n", user_prompt)
    conversational_agent.run(user_prompt)

submit_button.on_click(on_button_clicked)

## Show Memory Button
memory_button = widgets.Button(
    description="Show Memory", button_style="info", layout=widgets.Layout(width="100%", height="100%")
)

def on_memory_button_clicked(b):
    memory = conversational_agent.memory.load()
    if len(memory):
        print("\nMemory:\n", memory)
    else:
        print("Memory is empty")

memory_button.on_click(on_memory_button_clicked)

## Clear Memory Button
clear_button = widgets.Button(
    description="Clear Memory", button_style="warning", layout=widgets.Layout(width="100%", height="100%")
)

def on_clear_button_button_clicked(b):
    conversational_agent.memory.clear()
    print("\nMemory is cleared\n")

clear_button.on_click(on_clear_button_button_clicked)

## Layout
grid = widgets.GridspecLayout(3, 3, height="200px", width="800px", grid_gap="10px")
grid[0, 2] = clear_button
grid[0:2, 0:2] = user_input
grid[2, 0:] = submit_button
grid[1, 2] = memory_button

display(grid)
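Outside a notebook, the same three actions (submit, show memory, clear memory) could be driven from a plain text loop. The sketch below is an illustration under stated assumptions, not part of the Haystack notebook: `chat_loop`, the `/memory` and `/clear` commands and the echo responder are all made up for this example, and memory is a simple list rather than the agent's memory object.

```python
from typing import Callable, Iterable, List


def chat_loop(lines: Iterable[str], respond: Callable[[str], str]) -> List[str]:
    """Process commands and prompts; return what would be shown to the user."""
    memory: List[str] = []
    output: List[str] = []
    for line in lines:
        text = line.strip()
        if not text:
            continue  # ignore empty input
        if text == "/memory":  # same role as the "Show Memory" button
            output.append("\n".join(memory) if memory else "Memory is empty")
        elif text == "/clear":  # same role as the "Clear Memory" button
            memory.clear()
            output.append("Memory is cleared")
        else:  # same role as the "Submit" button
            reply = respond(text)
            memory.append(f"Human: {text}")
            memory.append(f"AI: {reply}")
            output.append(reply)
    return output


if __name__ == "__main__":
    # Scripted input keeps the example deterministic; pass sys.stdin instead
    # for an interactive session. The echo function stands in for an agent.
    script = ["hello", "/memory", "/clear", "/memory"]
    for shown in chat_loop(script, respond=lambda prompt: f"echo: {prompt}"):
        print(shown)
```

To try this against the agent built earlier, a small wrapper that extracts the text of the agent's answer could be passed as `respond`; the exact shape of what `conversational_agent.run` returns should be checked first.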

I’m currently the Chief Evangelist @ HumanFirst. I explore and write about all things at the intersection of AI and language; ranging from LLMs, Chatbots, Voicebots, Development Frameworks, Data-Centric latent spaces and more.