AI: Create Your Own Virtual Voice Agent

Step-by-Step Guide to Building a Virtual Voice Agent

Introduction

Chatbots are becoming commonplace and most people have had some exposure to them. Few have taken the next step, which is voice-enabling their chatbot. The purpose of this story is to demystify the process of voice-enabling your chatbot.

For an indication of where we are heading with this story, watch the video below…

Apart from general functions, voice translation is one of the most powerful applications: the conversation can be translated in real time during a phone call. There is a certain mystique to speaking to a bot and having the bot speak back to you, especially in a different language.

Chatbot Migration Considerations

There are a few elements to always keep in mind when migrating a messaging / text bot (aka chatbot) to a voice bot.

The first is that voice is ephemeral…it has a very short shelf life. Once spoken, it evaporates into the atmosphere. With text chat, dialogs can be longer, and multiple dialogs can be sent at any given point in the conversation. This is not practical in a voice conversation. In voice, each dialog turn needs to be a single, shorter message, and it cannot be overloaded with detail the user has to remember.
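
One rough way to enforce "shorter text bodies" when porting a chatbot is to estimate how long each response takes to speak and flag the ones that run long. The sketch below assumes an average speaking rate of about 150 words per minute and a 10-second budget per turn; both numbers are illustrative assumptions, not Watson defaults, and the helper names are hypothetical.

```python
# Minimal sketch: flag bot responses that are probably too long for voice.
# ASSUMPTIONS: ~150 words per minute speaking rate and a 10-second limit
# per turn are illustrative numbers, not Watson Text to Speech defaults.

WORDS_PER_MINUTE = 150       # assumed average speaking rate
MAX_SECONDS_PER_TURN = 10.0  # hypothetical per-turn budget


def estimated_seconds(text: str, wpm: int = WORDS_PER_MINUTE) -> float:
    """Estimate how long the text takes to speak aloud, from its word count."""
    words = len(text.split())
    return words / wpm * 60.0


def too_long_for_voice(text: str, limit: float = MAX_SECONDS_PER_TURN) -> bool:
    """True if the response would likely overload the listener's memory."""
    return estimated_seconds(text) > limit


if __name__ == "__main__":
    chat_reply = ("Your balance is 1,250 rand. Your last five transactions were "
                  "a grocery purchase, a fuel purchase, an airtime top-up, "
                  "a transfer to savings and a restaurant payment. "
                  "Would you like more detail on any of them, or should I "
                  "email you a full statement for the month?")
    voice_reply = "Your balance is 1,250 rand. Anything else?"
    for reply in (chat_reply, voice_reply):
        print(f"{estimated_seconds(reply):5.1f}s  too long: {too_long_for_voice(reply)}")
```

A reply that fails the check is a candidate for splitting into several single-turn prompts rather than being read out in one go.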

Secondly, a text message is essentially an asynchronous medium. The user can review and reply at their leisure. Hence messaging suits our multi-threaded, asynchronous digital habits. With voice, the conversation is single-threaded and synchronous, and it demands the user's full attention. Again, this will only work for shorter conversations with fewer dialog turns.

Thirdly, users prefer to perform transactions (buying, banking, etc.) via messaging rather than voice. One of the reasons might be that messaging offers a reference, a paper trail of sorts, whereas voice does not. One way to work around this problem is to send a text/SMS message confirming the transaction, or to give the user a facility where they can find a transcript of their voice conversation. Alternatively, break out from the voice conversation to a different medium.
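
The SMS confirmation idea can be sketched in a few lines. This assumes Twilio as the SMS provider (the same provider used for the phone number later in this story) and uses its Python helper library's `Client(...).messages.create(body=..., from_=..., to=...)` call; the message wording, the `build_confirmation` helper and the 160-character single-segment limit for plain GSM-7 text are illustrative assumptions, not part of IBM Voice Agent.

```python
# Minimal sketch: send a text confirmation after a voice transaction.
# ASSUMPTIONS: Twilio is the SMS provider; the message wording and the
# hypothetical build_confirmation helper are illustrative. 160 characters
# is the single-segment limit for plain GSM-7 text.

SINGLE_SMS_LIMIT = 160


def build_confirmation(amount: str, payee: str, reference: str) -> str:
    """Compose a short confirmation that fits in one SMS segment."""
    body = f"Confirmed: {amount} paid to {payee}. Reference: {reference}."
    if len(body) > SINGLE_SMS_LIMIT:
        raise ValueError("confirmation would span more than one SMS segment")
    return body


def send_confirmation(body: str, to_number: str, from_number: str,
                      account_sid: str, auth_token: str) -> str:
    """Send the SMS via Twilio and return the message SID."""
    from twilio.rest import Client  # pip install twilio; imported only when sending
    client = Client(account_sid, auth_token)
    message = client.messages.create(body=body, from_=from_number, to=to_number)
    return message.sid


if __name__ == "__main__":
    print(build_confirmation("R500", "City Power", "TX123"))
```

Keeping message composition separate from sending makes the wording easy to test without touching the network.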

Lastly, with messaging, silences caused by lag are tolerable. Lag might be caused by slow API response times, a geographically dispersed system and so on. In any voice conversation, as is the case with traditional IVR, silence is deadly. Response times should ideally be under a second.
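
Since the caller hears silence for the sum of every stage in a turn, it helps to time each stage and check the total against the one-second target. The sketch below uses `time.sleep` calls as stand-ins for Speech to Text, Watson Assistant and Text to Speech; the stage names and sleep durations are made-up illustrations, not measured figures.

```python
# Minimal sketch: check a voice turn against a sub-second latency budget.
# ASSUMPTION: the sleeps below are stand-ins with made-up durations;
# replace them with your real STT, Assistant and TTS calls.
import time
from contextlib import contextmanager

BUDGET_SECONDS = 1.0  # the "ideally under a second" target from the text


@contextmanager
def timed(stage: str, timings: dict):
    """Record the wall-clock duration of a block under the given stage name."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[stage] = time.perf_counter() - start


def over_budget(timings: dict, budget: float = BUDGET_SECONDS) -> bool:
    """True if the total silence the caller hears exceeds the budget."""
    return sum(timings.values()) > budget


if __name__ == "__main__":
    timings = {}
    with timed("speech_to_text", timings):
        time.sleep(0.25)  # stand-in for a real STT call
    with timed("assistant", timings):
        time.sleep(0.30)  # stand-in for Watson Assistant plus any API lookups
    with timed("text_to_speech", timings):
        time.sleep(0.20)  # stand-in for a real TTS call
    for stage, seconds in timings.items():
        print(f"{stage:15s} {seconds:.2f}s")
    print("over budget:", over_budget(timings))
```

Timing per stage, rather than only end to end, shows which component (often a slow backend API called from the dialog) is eating the budget.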

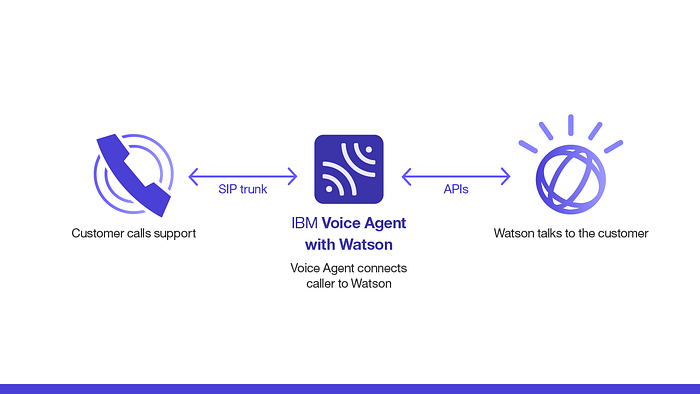

Choosing Our Tools

In order to achieve our goal, we need to choose our tools for the job. The customer makes a call, and the dialed number routes to a SIP gateway. The SIP gateway in turn is connected to IBM Voice Agent with Watson. The voice agent connects to the Watson services, which talk to the customer. Or in this case, you!

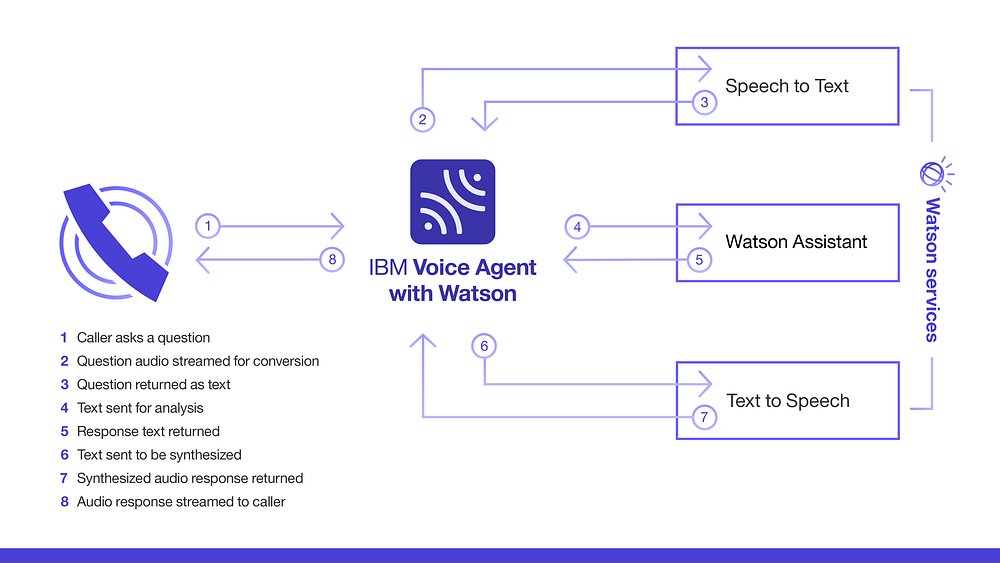

Below are the building blocks of the solution. The caller says something (step 1), and this utterance is transported via the SIP gateway to the IBM Voice Agent. The speech (step 2) is then converted to text (step 3), and the text is in turn sent (step 4) to Watson Assistant.

Watson Assistant contains all the elements that constitute the chatbot. These elements are:

* Intents

* Entities

* Dialog Flow with Scripts

* Translation & Sentiment APIs can be invoked from here

The text output from Watson Assistant (step 5) is sent to the Text to Speech engine (step 6) and from here via the Voice Agent (step 7) to the caller (step 8).

The Voice Agent component is the facilitator between the different elements.
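
To make the orchestration role concrete, here is one conversational turn written as plain function composition. The functions `speech_to_text`, `assistant_reply` and `text_to_speech` are hypothetical stubs (audio is faked as bytes and the "intent" is a keyword match), not the Watson SDK; the point is only that the Voice Agent passes data between services and holds no business logic itself.

```python
# Minimal sketch of one conversational turn through the Voice Agent.
# ASSUMPTION: speech_to_text, assistant_reply and text_to_speech are
# hypothetical stubs, not the Watson SDK; audio is faked as UTF-8 bytes.
from typing import Callable


def speech_to_text(audio: bytes) -> str:
    """Steps 2-3: convert the caller's audio to text (stubbed)."""
    return audio.decode("utf-8")


def assistant_reply(text: str) -> str:
    """Steps 4-5: Watson Assistant maps text to an intent and a response (stubbed)."""
    if "balance" in text.lower():
        return "Your balance is 1,250 rand."
    return "Sorry, I did not catch that. Could you repeat it?"


def text_to_speech(text: str) -> bytes:
    """Step 6: synthesize the response as audio (stubbed)."""
    return text.encode("utf-8")


def voice_agent_turn(
    caller_audio: bytes,
    stt: Callable[[bytes], str] = speech_to_text,
    assistant: Callable[[str], str] = assistant_reply,
    tts: Callable[[str], bytes] = text_to_speech,
) -> bytes:
    """Orchestrate only: audio in from the caller (step 1), audio back out (steps 7-8)."""
    transcript = stt(caller_audio)
    response = assistant(transcript)
    return tts(response)


if __name__ == "__main__":
    print(voice_agent_turn(b"What is my balance?"))
```

Passing the services in as parameters mirrors the configuration screens that follow: each service can be swapped (for example, a different Speech to Text provider) without touching the turn logic.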

Supported Languages

For a language to be supported, it must be supported by all Watson services that you configure in your voice agent. Using the Speech to Text, Text to Speech, and Watson Assistant services, the following languages are supported:

- Brazilian Portuguese

- French (Speech to Text broadband only)

- German (Speech to Text broadband only)

- Japanese

- Spanish

- UK English

- US English
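
The rule "supported by all configured services" is a set intersection, and the broadband-only caveat matters because phone calls typically carry narrowband (8 kHz) audio. The sketch encodes the list above; the assumption that every language not marked "broadband only" also has a narrowband Speech to Text model, and that all listed languages are covered by Text to Speech and Watson Assistant, is read from the list rather than confirmed elsewhere. The helper names are hypothetical.

```python
# Minimal sketch: which listed languages can a phone-based agent use?
# Data comes from the supported-languages list. ASSUMPTION: languages not
# marked "broadband only" are treated as having both broadband and
# narrowband Speech to Text models.

STT_BANDS = {
    "Brazilian Portuguese": {"broadband", "narrowband"},
    "French": {"broadband"},  # Speech to Text broadband only
    "German": {"broadband"},  # Speech to Text broadband only
    "Japanese": {"broadband", "narrowband"},
    "Spanish": {"broadband", "narrowband"},
    "UK English": {"broadband", "narrowband"},
    "US English": {"broadband", "narrowband"},
}


def usable_languages(*per_service):
    """A language is usable only if every configured service supports it."""
    return set.intersection(*map(set, per_service)) if per_service else set()


def stt_languages(band):
    """Languages whose Speech to Text service offers the given band."""
    return {lang for lang, bands in STT_BANDS.items() if band in bands}


if __name__ == "__main__":
    # ASSUMPTION: every listed language is covered by Text to Speech and
    # Watson Assistant, as the list implies.
    tts_and_assistant = set(STT_BANDS)
    for band in ("broadband", "narrowband"):
        print(band, sorted(usable_languages(stt_languages(band), tts_and_assistant)))
```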

Creating the Voice Agent

When creating a voice agent, Watson Voice Agent automatically searches for any available Watson service instances you can use in creating your voice agent. This is a very useful feature and can save you money.

Certain plans also allow only a limited number of instances, so reusing existing instances of TTS, STT, etc. can save resources. If no service instance is available, you can create one along with the voice agent or connect to services in a different IBM Cloud account. It is also possible to use other cloud services, such as a Google Speech to Text or Google Text to Speech instance.

On your dashboard, go to the Voice agents tab and click Create a Voice Agent.

Select Voice when you need to choose the agent type.

For Name, specify a unique name for your voice agent. As you might end up with a list of services, choose a descriptive name. Also consider adding the region where your services are hosted; this will come in handy later. The name can be up to 64 characters.
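
If you script your setup or keep an inventory of agents, a small helper can apply the naming advice before you type it into the dashboard. The only rules taken from the text are "unique" and "up to 64 characters"; the `<purpose>-<region>` pattern, the helper name and the exact-match uniqueness check are assumptions for illustration.

```python
# Minimal sketch: build a descriptive, region-tagged voice agent name.
# ASSUMPTION: the "<purpose>-<region>" pattern and this helper are
# hypothetical; the only rules from the text are "unique" and "<= 64 chars".
# Uniqueness is checked by exact string match here.

MAX_NAME_LENGTH = 64


def agent_name(purpose: str, region: str, existing: set) -> str:
    """Return '<purpose>-<region>' if it is short enough and not already taken."""
    name = f"{purpose}-{region}"
    if len(name) > MAX_NAME_LENGTH:
        raise ValueError(f"name is {len(name)} characters; the limit is {MAX_NAME_LENGTH}")
    if name in existing:
        raise ValueError(f"a voice agent named {name!r} already exists")
    return name


if __name__ == "__main__":
    print(agent_name("banking-demo", "us-south", {"banking-demo-eu-de"}))
```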

For Phone number, add the number from your SIP trunk, including the country and area codes. The phone number can have a maximum of 30 characters, including spaces and + ( ) - characters. I successfully set up a South African number using Twilio. Choosing a local number based on your location saves money at demo time, and also while testing from a phone.
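
The phone number constraints above translate directly into a check you can run before pasting numbers into the form, including when you add several numbers to one agent. This sketch enforces only the stated rules (at most 30 characters; digits, spaces and `+ ( ) -` only) plus "contains at least one digit"; it does not verify that a country code is present. The sample numbers are made up.

```python
# Minimal sketch: validate phone numbers against the Voice Agent form rules.
# Rules from the text: max 30 characters, spaces and + ( ) - allowed.
# ASSUMPTION: the sample numbers are made up; country codes are not checked.
import re

MAX_PHONE_LENGTH = 30
_ALLOWED = re.compile(r"[0-9 +()\-]+")  # digits, space, plus, parentheses, hyphen


def valid_phone_number(number: str) -> bool:
    """True if the number uses only allowed characters and fits the length limit."""
    return (
        0 < len(number) <= MAX_PHONE_LENGTH
        and _ALLOWED.fullmatch(number) is not None
        and any(ch.isdigit() for ch in number)
    )


if __name__ == "__main__":
    numbers = ["+27 21 123 4567", "+27 (11) 765-4321"]  # made-up examples
    print(all(valid_phone_number(n) for n in numbers))
```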

You can add multiple numbers to one Voice Agent by clicking Manage, next to Phone Number.

To enable call transfer, enter the termination URI for your Default transfer target.
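
A transfer target is a SIP URI of the general form `sip:user@host` (or `sips:` for TLS), as defined in RFC 3261. The sketch below sanity-checks that shape before you save it; the regular expression is a deliberate simplification that rejects URI parameters such as `;transport=tls` and does not implement the full RFC grammar, and the Twilio-style host in the example is an illustrative assumption rather than a real trunk.

```python
# Minimal sketch: sanity-check a transfer target of the form sip:user@host[:port].
# ASSUMPTION: simplified pattern, not the full RFC 3261 grammar; URI
# parameters (e.g. ";transport=tls") are rejected. Example host is made up.
import re

_SIP_URI = re.compile(r"(sips?):([^@\s;]+)@([A-Za-z0-9.-]+)(?::(\d{1,5}))?")


def parse_transfer_target(uri: str) -> dict:
    """Split a SIP URI into scheme, user, host and optional port."""
    m = _SIP_URI.fullmatch(uri.strip())
    if m is None:
        raise ValueError(f"not a sip:user@host URI: {uri!r}")
    scheme, user, host, port = m.groups()
    return {"scheme": scheme, "user": user, "host": host,
            "port": int(port) if port else None}


if __name__ == "__main__":
    print(parse_transfer_target("sip:+27211234567@example.pstn.twilio.com"))
```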

Under Conversation, configure the connection to your Watson Assistant service instance by clicking Location 1 or Location 2 and enabling the location that you selected. You can use Watson Assistant service instances in IBM Cloud accounts that you or someone else owns. You can also connect to any of these options through a service orchestration engine.

The Watson Assistant portion of your solution holds the logic, dialog, intents and entities. Most of your programming, logic and general Voice Agent behavior is determined here.

Under Text to Speech, review the default configuration for your Text to Speech service instance by clicking Location 1 or Location 2 and enabling that location. You can customize your configuration with the following.

Find more here…